Observability - OpenTelemetry Doc V1

架构

OpenTelemetry 也被称为 OTel,是一个供应商中立的、开源的可观测性框架, 可用于插桩、生成、采集和导出链路、 指标和日志等遥测数据

概述

专注于数据标准,不提供存储和可视化

- OpenTelemetry 是一个可观测性框架和工具包, 旨在创建和管理遥测数据,如链路、 指标和日志

- OpenTelemetry 是供应商和工具无关的,这意味着它可以与各种可观测性后端一起使用, 包括 Jaeger 和 Prometheus 这类开源工具以及商业化产品

- OpenTelemetry 不是像 Jaeger、Prometheus 或其他商业供应商那样的可观测性后端

- OpenTelemetry 专注于遥测数据的生成、采集、管理和导出

- OpenTelemetry 的一个主要目标是, 无论应用程序或系统采用何种编程语言、基础设施或运行时环境,你都可以轻松地将其仪表化

- 遥测数据的存储和可视化是有意留给其他工具处理

可观测性

- 可观测性是通过检查系统输出来理解系统内部状态的能力

- 在软件的背景下,这意味着能够通过检查遥测数据(包括链路、指标和日志)来理解系统的内部状态

- 要使系统可观测,必须对其进行仪表化

- 代码必须发出链路、指标或日志,然后,仪表化的数据必须发送到可观测性后端

OpenTelemetry

OpenTelemetry 满足可观测性的需求,并遵循两个关键原则

- 你所生成的数据归属于你自己,不会被供应商锁定

- 你只需要学习一套 API 和约定

主要组件

- 适用于所有组件的规范

- 定义遥测数据形状的标准协议

- 为常见遥测数据类型定义标准命名方案的语义约定

- 定义如何生成遥测数据的 API

- 实现规范、API 和遥测数据导出的语言 SDK

- 实现常见库和框架的仪表化的库生态系统

- 可自动生成遥测数据的自动仪表化组件,无需更改代码

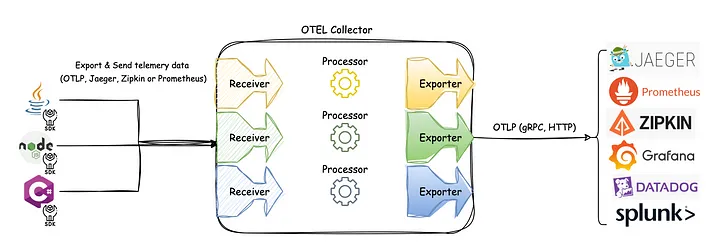

- OpenTelemetry Collector:接收、处理和导出遥测数据的代理

- 各种其他工具

- OpenTelemetry Operator for Kubernetes

- OpenTelemetry Helm Charts

- FaaS

可扩展性

OpenTelemetry 被设计为可扩展的 - 几乎每个层面都可以实现扩展

- 向 OpenTelemetry Collector 添加接收器以支持来自自定义源的遥测数据

- 将自定义仪表化库加载到 SDK 中

- 创建适用于特定用例的 SDK 或 Collector 的分发

- A distribution, not to be confused with a fork, is customized version of an OpenTelemetry component.

- 为尚不支持 OpenTelemetry 协议(OTLP)的自定义后端创建新的导出器

- 为非标准上下文传播格式创建自定义传播器

概念

入门

Observability

- 可观测性让你能够从外部理解一个系统,它允许你在不了解系统内部运作的情况下,对该系统提出问题

- 可观测性能帮你轻松排查和处理新出现的问题,也就是所谓的”未知的未知“

- 要对你的系统提出这些问题,你的应用程序必须进行适当的插桩

- 应用程序代码必须能够发出信号, 例如链路、 指标和日志

- 当开发人员不需要添加更多插桩就能排查问题时, 我们就可以说这个应用程序已经完成了适当的插桩,因为他们已经拥有了所需的所有信息

- OpenTelemetry 就是一种为应用程序代码进行插桩的机制,它的目的是帮助使系统变得可观测

可靠性 + 指标

Telemetry

- Telemetry 指的是系统及其行为发出的数据。这些数据可以是链路、指标和日志的形式

可靠性

- 服务是否在按用户期望的方式运行?

指标

- 指标是对一段时间内基础设施或应用程序的数值数据的汇总

- 例如:系统错误率、CPU 使用率和特定服务的请求率

SLI - Service Level Indicator - 技术指标

- 即服务水平指标(Service Level Indicator),代表对服务行为的衡量

- 一个好的 SLI 应该从用户的角度来衡量你的服务 - 网页加载的速度

SLO - Service Level Objective - 业务价值

- 服务水平目标(Service Level Objective)是一种向组织内部或其他团队传达服务可靠性的方法

- SLO 通过将具体的技术指标(SLI)与业务目标关联起来,使技术性能变得对业务有意义

- 网站页面加载时间(SLI)必须在3秒内,以确保良好的用户体验和提高转化率(业务价值)

分布式链路

- 分布式链路让你能够观察请求如何在复杂的分布式系统中传播

- 提高了应用程序或系统健康状况的可见性,并让你能够调试那些难以在本地重现的行为

- 对于分布式系统来说,分布式链路是必不可少的,因为这些系统通常存在不确定性问题,或者过于复杂而无法在本地重现

日志

- 日志是由服务或其他组件发出的带时间戳的消息

- 与链路不同,它们不一定与特定的用户请求或事务相关联

- 日志虽然有用,但仅靠它们来追踪代码执行还不够,因为日志通常缺乏上下文信息,比如它们是从哪里被调用的

- 当日志作为 span(跨度)的一部分,或者与 trace(链路)和 span 关联起来时,它们的价值就会大大增加

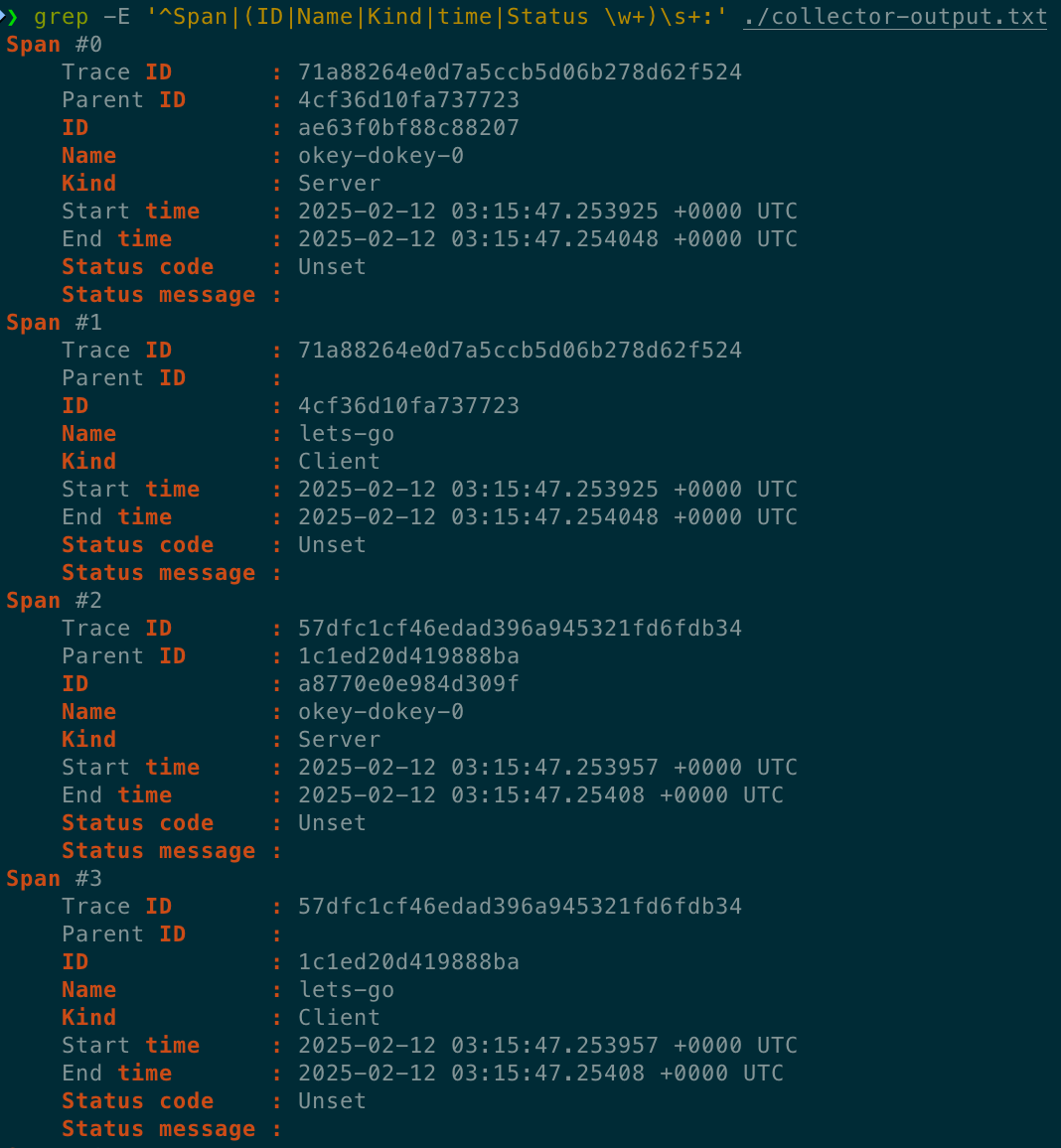

Spans

- Span(跨度)是分布式链路中的基本构建块,它代表了一个具体的操作或工作单元

- 每个 span 都记录了请求中的特定动作,帮助我们了解操作执行过程中发生的详细情况

- 一个 span 包含名称、时间相关的数据、结构化的日志消息,以及其他元数据(属性),这些信息共同描绘了该操作的完整画面

- 属性 - 附加在 span 上的额外信息 - 提供了更多关于操作上下文的细节

分布式链路

- 记录了请求(无论是来自应用程序还是终端用户)在多服务架构(如微服务和无服务器应用)中传播的路径

- 一个 Trace 由一个或多个 Span 组成

- 第一个 Span 被称为根 Span,它代表了一个请求从开始到结束的全过程

- 根 Span 下的子 Span 则提供了请求过程中更详细的上下文信息(或者说,构成了请求的各个步骤)

- 如果没有链路,在分布式系统中找出性能问题的根源将会非常具有挑战性

- 链路通过分解请求在分布式系统中的流转过程,使得调试和理解分布式系统变得不那么令人生畏

许多可观测性后端会将链路可视化为瀑布图 - 清晰地展示了根 span 与其子 span 之间的父子关系 - 表现为嵌套结构

上下文传播

- 通过上下文传播,信号可以相互关联, 无论它们是在何处生成的

- 尽管它不仅限于链路追踪,但它允许 trace 跨进程和网络边界任意分布的服务构建相关系统的关联信息

上下文

上下文是一个对象,它包含发送和接收服务 (或执行单元) 用于将一个信号与另一个信号关联起来的信息

如果服务 A 调用服务 B

那么服务 A 中 ID 在上下文中的 span 将用作服务 B 中创建的下一个 span 的父 span

上下文中的 trace ID 也将用于服务 B 中创建的下一个 span, 这表示该 span 与服务 A 中的 span 属于同一 trace 的一部分

传播

- 传播是上下文在服务和进程之间移动的机制

- 它序列化或反序列化上下文对象并提供要从一个服务传播到另一个服务的相关信息

- 传播通常由检测库处理并且对用户是透明的,但如果你需要手动传播上下文,则可以使用传播 API

- OpenTelemetry 维护着几个官方传播器,默认传播器使用 W3C Trace Context规范指定的标头

信号

- OpenTelemetry 的目的是收集、处理和导出信号

- 信号是系统输出,描述了操作系统和平台上运行的应用程序的底层活动

- 信号可以是你希望在特定时间点测量的某项指标,如温度或内存使用率, 也可以是贯穿分布式系统组件的事件,你希望对其进行跟踪

- 可以将不同的信号组合在一起,从不同角度观察同一种技术的内部运作方式

- 目前,OpenTelemetry 支持以下类型的信号 - Traces + Metrics + Logs + Baggage

- 事件是一种特定类型的日志,而 profiles 正在由 Profiling 工作组开发

Traces

The path of a request through your application.

- Traces give us the big picture of what happens when a request is made to an application.

- Whether your application is a monolith with a single database or a sophisticated mesh of services

- traces are essential to understanding the full “path” a request takes in your application.

hello span

1 | { |

- This is the root span, denoting the beginning and end of the entire operation.

- Note that it has a trace_id field indicating the trace, but has no parent_id. That’s how you know it’s the root span.

hello-greetings span

1 | { |

- This span encapsulates specific tasks, like saying greetings, and its parent is the hello span.

- Note that it shares the same trace_id as the root span, indicating it’s a part of the same trace.

- Additionally, it has a parent_id that matches the span_id of the hello span.

hello-salutations span

1 | { |

- This span represents the third operation in this trace and, like the previous one, it’s a child of the hello span.

- That also makes it a sibling of the hello-greetings span.

Summary

- These three blocks of JSON all share the same trace_id, and the parent_id field represents a hierarchy. That makes it a Trace!

- Another thing you’ll note is that each Span looks like a structured log. That’s because it kind of is!

- One way to think of Traces is that they’re a collection of structured logs with context, correlation, hierarchy, and more baked in.

- However, these “structured logs” can come from different processes, services, VMs, data centers, and so on.

- This is what allows tracing to represent an end-to-end view of any system.

To understand how tracing in OpenTelemetry works, let’s look at a list of components that will play a part in instrumenting our code.

Tracer Provider

- A Tracer Provider (sometimes called TracerProvider) is a factory for Tracers.

- In most applications, a Tracer Provider is initialized once and its lifecycle matches the application’s lifecycle.

- Tracer Provider initialization also includes Resource and Exporter initialization.

- It is typically the first step in tracing with OpenTelemetry.

- In some language SDKs, a global Tracer Provider is already initialized for you.

Tracer

- A Tracer creates spans containing more information about what is happening for a given operation, such as a request in a service.

- Tracers are created from Tracer Providers.

Trace Exporters

- Trace Exporters send traces to a consumer.

- This consumer can be standard output, the OpenTelemetry Collector, or any open source or vendor backend of your choice.

Context Propagation

- Context Propagation is the core concept that enables Distributed Tracing.

- With Context Propagation, Spans can be correlated with each other and assembled into a trace, regardless of where Spans are generated.

Spans

- A span represents a unit of work or operation.

- Spans are the building blocks of Traces.

- In OpenTelemetry, they include the following information

- Name

- Parent span ID (empty for root spans)

- Start and End Timestamps

- Span Context

- Attributes

- Span Events

- Span Links

- Span Status

Sample span

1 | { |

- Spans can be nested, as is implied by the presence of a parent span ID: child spans represent sub-operations.

- This allows spans to more accurately capture the work done in an application.

Span Context

- Span context is an immutable object on every span that contains the following

- The Trace ID representing the trace that the span is a part of

- The span’s Span ID

- Trace Flags, a binary encoding containing information about the trace

- Trace State, a list of key-value pairs that can carry vendor-specific trace information

- Span context is the part of a span that is serialized and propagated alongside Distributed Context and Baggage.

- Because Span Context contains the Trace ID, it is used when creating Span Links.

Attributes

- Attributes are key-value pairs that contain metadata that you can use to annotate a Span to carry information about the operation it is tracking.

- For example, if a span tracks an operation that adds an item to a user’s shopping cart in an eCommerce system

- you can capture the user’s ID, the ID of the item to add to the cart, and the cart ID.

- You can add attributes to spans during or after span creation.

- Prefer adding attributes at span creation to make the attributes available to SDK sampling.

- If you have to add a value after span creation, update the span with the value.

- Attributes have the following rules that each language SDK implements

- Keys must be non-null string values

- Values must be a non-null string, boolean, floating point value, integer, or an array of these values

- Additionally, there are Semantic Attributes, which are known naming conventions for metadata that is typically present in common operations.

- It’s helpful to use semantic attribute naming wherever possible so that common kinds of metadata are standardized across systems.

Span Events

- A Span Event can be thought of as a structured log message (or annotation) on a Span

- typically used to denote a meaningful, singular point in time during the Span’s duration.

- For example, consider two scenarios in a web browser

- Tracking a page load - Span

- Denoting when a page becomes interactive - Span Event

- A Span is best used to the first scenario because it’s an operation with a start and an end.

- A Span Event is best used to track the second scenario because it represents a meaningful, singular point in time.

When to use span events versus span attributes

- Since span events also contain attributes, the question of when to use events instead of attributes might not always have an obvious answer.

- To inform your decision, consider whether a specific timestamp is meaningful.

- When you’re tracking an operation with a span and the operation completes, you might want to add data from the operation to your telemetry.

- If the timestamp in which the operation completes is meaningful or relevant, attach the data to a span event.

- If the timestamp isn’t meaningful, attach the data as span attributes.

Span Links

Causal relationship

- Links exist so that you can associate one span with one or more spans, implying a causal relationship.

- For example, let’s say we have a distributed system where some operations are tracked by a trace.

- In response to some of these operations, an additional operation is queued to be executed, but its execution is asynchronous.

- We can track this subsequent operation with a trace as well.

- We would like to associate the trace for the subsequent operations with the first trace

- but we cannot predict when the subsequent operations will start.

- We need to associate these two traces, so we will use a span link.

- You can link the last span from the first trace to the first span in the second trace.

- Now, they are causally associated with one another.

- Links are optional but serve as a good way to associate trace spans with one another.

Span Status

- Each span has a status. The three possible values are - Unset + Error + Ok

- The default value is Unset

- A span status that is Unset means that the operation it tracked successfully completed without an error.

- When a span status is Error, then that means some error occurred in the operation it tracks.

- For example, this could be due to an HTTP 500 error on a server handling a request.

- When a span status is Ok, then that means the span was explicitly marked as error-free by the developer of an application.

- it’s not required to set a span status as Ok when a span is known to have completed without error, as this is covered by Unset.

- What Ok does is represent an unambiguous “final call” on the status of a span that has been explicitly set by a user.

- This is helpful in any situation where a developer wishes for there to be no other interpretation of a span other than “successful”.

- To reiterate

- Unset represents a span that completed without an error.

- Ok represents when a developer explicitly marks a span as successful.

- In most cases, it is not necessary to explicitly mark a span as Ok.

Span Kind

- When a span is created, it is one of Client, Server, Internal, Producer, or Consumer.

- This span kind provides a hint to the tracing backend as to how the trace should be assembled.

- The parent of a server span is often a remote client span, and the child of a client span is usually a server span.

- Similarly, the parent of a consumer span is always a producer and the child of a producer span is always a consumer.

- If not provided, the span kind is assumed to be internal.

Client

- A client span represents a synchronous outgoing remote call such as an outgoing HTTP request or database call.

- Note that in this context, “synchronous” does not refer to async/await, but to the fact that it is not queued for later processing.

Server

- A server span represents a synchronous incoming remote call such as an incoming HTTP request or remote procedure call.

Internal

- Internal spans represent operations which do not cross a process boundary.

- Things like instrumenting a function call or an Express middleware may use internal spans.

Producer

- Producer spans represent the creation of a job which may be asynchronously processed later.

- It may be a remote job such as one inserted into a job queue or a local job handled by an event listener.

Consumer

- Consumer spans represent the processing of a job created by a producer and may start long after the producer span has already ended.

Metrics

A measurement captured at runtime.

- A metric is a measurement of a service captured at runtime.

- The moment of capturing a measurements is known as a metric event

- which consists not only of the measurement itself, but also the time at which it was captured and associated metadata.

- Application and request metrics are important indicators of availability and performance.

- Custom metrics can provide insights into how availability indicators impact user experience or the business.

- Collected data can be used to alert of an outage or trigger scheduling decisions to scale up a deployment automatically upon high demand.

To understand how metrics in OpenTelemetry works, let’s look at a list of components that will play a part in instrumenting our code.

Meter Provider

- A Meter Provider (sometimes called MeterProvider) is a factory for Meters.

- In most applications, a Meter Provider is initialized once and its lifecycle matches the application’s lifecycle.

- Meter Provider initialization also includes Resource and Exporter initialization.

- It is typically the first step in metering with OpenTelemetry.

- In some language SDKs, a global Meter Provider is already initialized for you.

Meter

- A Meter creates metric instruments, capturing measurements about a service at runtime.

- Meters are created from Meter Providers.

Metric Exporter

- Metric Exporters send metric data to a consumer.

- This consumer can be standard output, the OpenTelemetry Collector, or any open source or vendor backend of your choice.

Metric Instruments

- In OpenTelemetry measurements are captured by metric instruments.

- A metric instrument is defined by - Name + Kind + Unit (optional) + Description (optional)

- The name, unit, and description

- are chosen by the developer or defined via semantic conventions for common ones like request and process metrics.

The instrument kind is one of the following:

- Counter

- A value that accumulates over time

- you can think of this like an odometer on a car; it only ever goes up.

- Asynchronous Counter

- Same as the Counter, but is collected once for each export.

- Could be used if you don’t have access to the continuous increments, but only to the aggregated value.

- UpDownCounter

- A value that accumulates over time, but can also go down again.

- An example could be a queue length, it will increase and decrease with the number of work items in the queue.

- Asynchronous UpDownCounter

- Same as the UpDownCounter, but is collected once for each export.

- Could be used if you don’t have access to the continuous changes, but only to the aggregated value (e.g., current queue size).

- Gauge

- Measures a current value at the time it is read.

- An example would be the fuel gauge in a vehicle. Gauges are synchronous.

- Asynchronous Gauge

- Same as the Gauge, but is collected once for each export.

- Could be used if you don’t have access to the continuous changes, but only to the aggregated value.

- Histogram

- A client-side aggregation of values, such as request latencies.

- A histogram is a good choice if you are interested in value statistics.

- For example: How many requests take fewer than 1s?

Aggregation

- An aggregation is a technique whereby a large number of measurements are combined into either exact or estimated statistics about metric events that took place during a time window.

- The OTLP protocol transports such aggregated metrics.

- The OpenTelemetry API provides a default aggregation for each instrument which can be overridden using the Views.

- The OpenTelemetry project aims to provide default aggregations that are supported by visualizers and telemetry backends.

- Unlike request tracing, which is intended to capture request lifecycles and provide context to the individual pieces of a request

- metrics are intended to provide statistical information in aggregate.

Views

- A view provides SDK users with the flexibility to customize the metrics output by the SDK.

- You can customize which metric instruments are to be processed or ignored.

- You can also customize aggregation and what attributes you want to report on metrics.

Language Support

Metrics are a stable signal in the OpenTelemetry specification.

| Language | Metrics |

|---|---|

| C++ | Stable |

| C#/.NET | Stable |

| Go | Stable |

| Java | Stable |

| JavaScript | Stable |

| PHP | Stable |

| Python | Stable |

Logs

A recording of an event.

- A log is a timestamped text record, either structured (recommended) or unstructured, with optional metadata.

- Of all telemetry signals, logs have the biggest legacy.

- Most programming languages have built-in logging capabilities or well-known, widely used logging libraries.

OpenTelemetry logs

- OpenTelemetry does not define a bespoke API or SDK to create logs.

- Instead, OpenTelemetry logs are the existing logs you already have from a logging framework or infrastructure component.

- OpenTelemetry SDKs and autoinstrumentation utilize several components to automatically correlate logs with traces.

- OpenTelemetry’s support for logs is designed to be fully compatible with what you already have

- providing capabilities to wrap those logs with additional context

- and a common toolkit to parse and manipulate logs into a common format across many different sources.

OpenTelemetry logs in the OpenTelemetry Collector

- Several receivers which parse logs from specific, known sources of log data.

- The filelogreceiver, which reads logs from any file and provides features to parse them from different formats or use a regular expression.

- Processors like the transformprocessor which lets you parse nested data, flatten nested structures, add/remove/update values, and more.

- Exporters that let you emit log data in a non-OpenTelemetry format.

- The first step in adopting OpenTelemetry frequently involves deploying a Collector as a general-purposes logging agent.

OpenTelemetry logs for applications

- In applications, OpenTelemetry logs are created with any logging library or built-in logging capabilities.

- When you add autoinstrumentation or activate an SDK

- OpenTelemetry will automatically correlate your existing logs with any active trace and span, wrapping the log body with their IDs.

- In other words, OpenTelemetry automatically correlates your logs and traces.

Language support - Logs are a stable signal in the OpenTelemetry specification.

| Language | Traces | Metrics | Logs |

|---|---|---|---|

| C++ | Stable | Stable | Stable |

| C#/.NET | Stable | Stable | Stable |

| Go | Stable | Stable | Beta |

| Java | Stable | Stable | Stable |

| JavaScript | Stable | Stable | Development |

| PHP | Stable | Stable | Stable |

| Python | Stable | Stable | Development |

Structured + Unstructured + Semistructured

- OpenTelemetry does not technically distinguish between structured and unstructured logs. You can use any log you have with OpenTelemetry.

- However, not all log formats are equally useful!

- Structured logs, in particular, are recommended for production observability because they are easy to parse and analyze at scale.

Structured logs

- A structured log is a log whose textual format follows a consistent, machine-readable format.

- For applications, one of the most common formats is JSON

- For infrastructure components, Common Log Format (CLF) is commonly used

- It is also common to have different structured log formats mixed together.

- For example, an Extended Log Format (ELF) log can mix JSON with the whitespace-separated data in a CLF log.

- Parse both the JSON and the ELF-related pieces into a shared format to make analysis on an observability backend easier.

- The filelogreceiver in the OpenTelemetry Collector contains standardized ways to parse logs like this.

- Structured logs are the preferred way to use logs.

- Because structured logs are emitted in a consistent format, they are straightforward to parse

- makes them easier to preprocess in an OpenTelemetry Collector, correlate with other data, and ultimate analyze in an Observability backend.

Unstructured logs

- Unstructured logs are logs that don’t follow a consistent structure.

- They may be more human-readable, and are often used in development.

- However, it is not preferred to use unstructured logs for production observability purposes

- since they are much more difficult to parse and analyze at scale.

- It is possible to store and analyze Unstructured logs in production

- although you may need to do substantial work to parse or otherwise pre-process them to be machine-readable.

- This will typically be necessary for a logging backend to know how to sort and organize the logs by timestamp.

- Although it’s possible to parse unstructured logs for analysis purposes, doing this may be more work than switching to structured logging

- such as via a standard logging framework in your applications.

Semistructured logs

- A semistructured log is a log that does use some self-consistent patterns to distinguish data so that it’s machine-readable

- but may not use the same formatting and delimiters between data across different systems.

- Although machine-readable, semistructured logs may require several different parsers to allow for analysis at scale.

OpenTelemetry logging components

Log Appender / Bridge

- As an application developer, the Logs Bridge API should not be called by you directly

- as it is provided for logging library authors to build log appenders / bridges.

- Instead, you just use your preferred logging library and configure it to use a log appender (or log bridge)

- that is able to emit logs into an OpenTelemetry LogRecordExporter.

- OpenTelemetry language SDKs offer this functionality.

Logger Provider

Part of the Logs Bridge API and should only be used if you are the author of a logging library.

- A Logger Provider (sometimes called LoggerProvider) is a factory for Loggers.

- In most cases, the Logger Provider is initialized once and its lifecycle matches the application’s lifecycle.

- Logger Provider initialization also includes Resource and Exporter initialization.

Logger

Part of the Logs Bridge API and should only be used if you are the author of a logging library.

- A Logger creates log records. Loggers are created from Log Providers.

Log Record Exporter

- Log Record Exporters send log records to a consumer.

- This consumer can be standard output, the OpenTelemetry Collector, or any open source or vendor backend of your choice.

Log Record

- A log record represents the recording of an event.

- In OpenTelemetry a log record contains two kinds of fields

- Named top-level fields of specific type and meaning

- Resource and attributes fields of arbitrary value and type

The top-level fields are

| Field Name | Description |

|---|---|

| Timestamp | Time when the event occurred. |

| ObservedTimestamp | Time when the event was observed. |

| TraceId | Request trace ID. |

| SpanId | Request span ID. |

| TraceFlags | W3C trace flag. |

| SeverityText | The severity text (also known as log level). |

| SeverityNumber | Numerical value of the severity. |

| Body | The body of the log record. |

| Resource | Describes the source of the log. |

| InstrumentationScope | Describes the scope that emitted the log. |

| Attributes | Additional information about the event. |

Baggage

Contextual information that is passed between signals.

- In OpenTelemetry, Baggage is contextual information that resides next to context.

- Baggage is a key-value store, which means it lets you propagate any data you like alongside context.

- Baggage means you can pass data across services and processes, making it available to add to traces, metrics, or logs in those services.

Example

- Baggage is often used in tracing to propagate additional data across services.

- For example, imagine you have a clientId at the start of a request, but you’d like for that ID to be available on all spans in a trace

- some metrics in another service, and some logs along the way.

- Because the trace may span multiple services

- you need some way to propagate that data without copying the clientId across many places in your codebase.

- By using Context Propagation to pass baggage across these services, the clientId is available to add to any additional spans, metrics, or logs.

- Additionally, instrumentations automatically propagate baggage for you.

What should OTel Baggage be used for?

- Baggage is best used to include information typically available only at the start of a request further downstream.

- This can include things like Account Identification, User IDs, Product IDs, and origin IPs, for example.

- Propagating this information using baggage allows for deeper analysis of telemetry in a backend.

- For example, if you include information like a User ID on a span that tracks a database call

- you can much more easily answer questions like “**which users are experiencing the slowest database calls?**”

- You can also log information about a downstream operation and include that same User ID in the log data.

Baggage security considerations

- Sensitive Baggage items can be shared with unintended resources, like third-party APIs.

- This is because automatic instrumentation includes Baggage in most of your service’s network requests.

- Specifically, Baggage and other parts of trace context are sent in HTTP headers, making it visible to anyone inspecting your network traffic.

- If traffic is restricted within your network, then this risk may not apply

- But keep in mind that downstream services could propagate Baggage outside your network.

- Also, there are no built-in integrity checks to ensure that Baggage items are yours, so exercise caution when reading them.

Baggage is not the same as attributes

unassociated + explicitly - Baggage Span Processors

- An important thing to note about baggage is that it is a separate key-value store

- and is unassociated with attributes on spans, metrics, or logs without explicitly adding them.

- To add baggage entries to attributes, you need to explicitly read the data from baggage and add it as attributes to your spans, metrics, or logs.

- Because a common use cases for Baggage is to add data to Span Attributes across a whole trace

- several languages have Baggage Span Processors that add data from baggage as attributes on span creation.

Instrumentation

How OpenTelemetry facilitates instrumentation

- In order to make a system observable, it must be instrumented

- That is, code from the system’s components must emit traces, metrics, and logs.

- Using OpenTelemetry, you can instrument your code in two primary ways

- Code-based solutions via official APIs and SDKs for most languages

- Zero-code solutions

- Code-based solutions

- allow you to get deeper insight and rich telemetry from your application itself.

- They let you use the OpenTelemetry API to generate telemetry from your application

- which acts as an essential complement to the telemetry generated by zero-code solutions.

- Zero-code solutions

- are great for getting started, or when you can’t modify the application you need to get telemetry out of.

- They provide rich telemetry from libraries you use and/or the environment your application runs in.

- Another way to think of it is that they provide information about what’s happening at the edges of your application.

- You can use both solutions simultaneously.

Additional OpenTelemetry Benefits

- OpenTelemetry provides more than just zero-code and code-based telemetry solutions.

- Libraries can leverage the OpenTelemetry API as a dependency

- which will have no impact on applications using that library, unless the OpenTelemetry SDK is imported.

- For each signal (traces, metrics, logs) you have several methods at your disposals to create, process, and export them.

- With context propagation built into the implementations, you can correlate signals regardless of where they are generated.

- Resources and Instrumentation Scopes allow grouping of signals, by different entities, like, the host, operating system or K8s cluster

- Each language-specific implementation of the API and SDK follows the requirements and expectations of the OpenTelemetry specification.

- Semantic Conventions provide a common naming schema that can be used for standardization across code bases and platforms.

Zero-code

Learn how to add observability to an application without the need to write code - libraries

- As ops you might want to add observability to one or more applications without having to edit the source.

- quickly gain some observability for a service without having to use the OpenTelemetry API & SDK for code-based instrumentation.

- Zero-code instrumentation adds the OpenTelemetry API and SDK capabilities to your application typically as an agent or agent-like installation.

- The specific mechanisms involved may differ by language

- ranging from bytecode manipulation, monkey patching, or eBPF to inject calls to the OpenTelemetry API and SDK into your application.

- Typically, zero-code instrumentation adds instrumentation for the libraries you’re using.

- This means that requests and responses, database calls, message queue calls, and so forth are what are instrumented.

- Your application’s code, however, is not typically instrumented. To instrument your code, you’ll need to use code-based instrumentation.

- Additionally, zero-code instrumentation lets you configure the Instrumentation Libraries and exporters loaded.

- You can configure zero-code instrumentation through environment variables and other language-specific mechanisms

- such as system properties or arguments passed to initialization methods.

- To get started, you only need a service name configured so that you can identify the service in the observability backend of your choice.

- Other configuration options are available, including:

- Data source specific configuration

- Exporter configuration

- Propagator configuration

- Resource configuration

- Automatic instrumentation is available for the following languages:

- .NET

- Go

- Java

- JavaScript

- PHP

- Python

Code-based

Learn the essential steps in setting up code-based instrumentation - application’s code

Import the OpenTelemetry API and SDK

- You’ll first need to import OpenTelemetry to your service code.

- If you’re developing a library or some other component that is intended to be consumed by a runnable binary

- then you would only take a dependency on the API.

- If your artifact is a standalone process or service, then you would take a dependency on the API and the SDK.

| Type | Dependency |

|---|---|

| library | API |

| standalone process or service | API + SDK |

Configure the OpenTelemetry API

- In order to create traces or metrics, you’ll need to first create a tracer and/or meter provider.

- In general, we recommend that the SDK should provide a single default provider for these objects. - factory

- You’ll then get a tracer or meter instance from that provider, and give it a name and version.

- The name you choose here should identify what exactly is being instrumented

- if you’re writing a library, then you should name it after your library as this name will namespace all spans or metric events produced.

- It is also recommended that you supply a version string (i.e., semver:1.0.0) that corresponds to the current version of your library or service.

Configure the OpenTelemetry SDK

- If you’re building a service process

- you’ll also need to configure the SDK with appropriate options for exporting your telemetry data to some analysis backend.

- We recommend that this configuration be handled programmatically through a configuration file or some other mechanism.

- There are also per-language tuning options you may wish to take advantage of.

Create Telemetry Data

- Once you’ve configured the API and SDK

- you’ll then be free to create traces and metric events through the tracer and meter objects you obtained from the provider.

- Make use of Instrumentation Libraries for your dependencies - https://opentelemetry.io/ecosystem/registry/

Export Data

- Once you’ve created telemetry data, you’ll want to send it somewhere.

- OpenTelemetry supports two primary methods of exporting data from your process to an analysis backend

- either directly from a process or by proxying it through the OpenTelemetry Collector.

- In-process export requires you to import and take a dependency on one or more exporters

- libraries that translate OpenTelemetry’s in-memory span and metric objects into the appropriate format for telemetry analysis tools

- In addition, OpenTelemetry supports a wire protocol known as OTLP, which is supported by all OpenTelemetry SDKs.

- This protocol can be used to send data to the OpenTelemetry Collector

- Collector - a standalone binary process that can be run as a proxy or sidecar to your service instances or run on a separate host.

- The Collector can then be configured to forward and export this data to your choice of analysis tools.

Libraries

Learn how to add native instrumentation to your library.

- OpenTelemetry provides instrumentation libraries for many libraries, which is typically done through library hooks or monkey-patching library code.

- Native library instrumentation with OpenTelemetry provides better observability and developer experience for users

- removing the need for libraries to expose and document hooks.

- Other advantages provided by native instrumentation include:

- Custom logging hooks can be replaced by common and easy to use OpenTelemetry APIs, users will only interact with OpenTelemetry.

- Traces, logs, metrics from library and application code are correlated and coherent.

- Common conventions allow users to get similar and consistent telemetry within same technology and across libraries and languages.

- Telemetry signals can be fine tuned (filtered, processed, aggregated) for various consumption scenarios

- using a wide variety of well-documented OpenTelemetry extensibility points.

Semantic conventions

- Semantic conventions are the main source of truth about what information is included on spans produced by

- web frameworks, RPC clients, databases, messaging clients, infrastructure, and more.

- Conventions make instrumentation consistent

- users who work with telemetry don’t have to learn library specifics

- observability vendors can build experiences for a wide variety of technologies, for example databases or messaging systems.

- When libraries follow conventions, many scenarios can be enabled without the user’s input or configuration.

- Semantic conventions are always evolving and new conventions are constantly added.

- Pay special attention to span names: strive to use meaningful names and consider cardinality when defining them.

- Also set the schema_url attribute that you can use to record what version of the semantic conventions you’re using.

Defining spans

- From the perspective of a library user and what the user might be interested in knowing about the behavior and activity of the library.

- As the library maintainer, you know the internals

- but the user will most likely be less interested in the inner workings of the library and more interested in the functionality of their application.

- Think about what information can be helpful in analyzing the usage of your library, then think about an appropriate way to model that data.

- Some aspects to consider include

- Spans and span hierarchies

- Numerical attributes on spans, as an alternative to aggregated metrics

- Span events

- Aggregated Metrics

- For example, if your library is making requests to a database, create spans only for the logical request to the database.

- The physical requests over the network should be instrumented within the libraries implementing that functionality.

- You should also favor capturing other activities, like object/data serialization as span events, rather than as additional spans.

- Follow the semantic conventions when setting span attributes.

When not to instrument

only instrument your library at its own level + When in doubt, don’t instrument

- Some libraries are thin clients wrapping network calls.

- Chances are that OpenTelemetry has an instrumentation library for the underlying RPC client.

- https://opentelemetry.io/ecosystem/registry/

- If a library exists, instrumenting the wrapper library might not be necessary.

- As a general guideline, only instrument your library at its own level.

- Don’t instrument if all the following cases apply

- Your library is a thin proxy on top of documented or self-explanatory APIs.

- OpenTelemetry has instrumentation for underlying network calls.

- There are no conventions your library should follow to enrich telemetry.

- When in doubt, don’t instrument.

- If you choose not to instrument, still be useful to provide a way to configure OpenTelemetry handlers for your internal RPC client instance.

- It’s essential in languages that don’t support fully automatic instrumentation and still useful in others.

OpenTelemetry API

- The first step when instrumenting an application is to include the OpenTelemetry API package as a dependency.

- OpenTelemetry has two main modules: API and SDK.

- OpenTelemetry API is a set of abstractions and non-operational implementations.

- Unless your application imports the OpenTelemetry SDK

- your instrumentation does nothing and does not impact application performance.

Libraries should only use the OpenTelemetry API

If you’re concerned about adding new dependencies, here are some considerations to help you decide how to minimize dependency conflicts

- OpenTelemetry Trace API reached stability in early 2021. It follows Semantic Versioning 2.0.

- Use the earliest stable OpenTelemetry API (1.0.*) and avoid updating it unless you have to use new features.

- While your instrumentation stabilizes, consider shipping it as a separate package, so that it never causes issues for users who don’t use it.

- Semantic conventions are stable, but subject to evolution

- while this does not cause any functional issues, you might need to update your instrumentation every once in a while.

- Having it in a preview plugin or in OpenTelemetry contrib repository

- may help keeping conventions up-to-date without breaking changes for your users.

Getting a tracer

- All application configuration is hidden from your library through the Tracer API.

- Libraries might allow applications to pass instances of TracerProvider to facilitate dependency injection and ease of testing

- or obtain it from global TracerProvider.

- OpenTelemetry language implementations might have different preferences for passing instances or accessing the global

- based on what’s idiomatic in each programming language.

- When obtaining the tracer, provide your library (or tracing plugin) name and version

- they show up on the telemetry and help users process and filter telemetry

- understand where it came from, and debug or report instrumentation issues.

What to instrument

Public APIs

- Public APIs are good candidates for tracing

- spans created for public API calls allow users to map telemetry to application code, understand the duration and outcome of library calls.

- Which calls to trace include

- Public methods that make network calls internally or local operations that take significant time and may fail, for example I/O.

- Handlers that process requests or messages.

- Follow conventions to populate attributes.

The following example shows how to instrument a Java application:

1 | private static Tracer tracer = getTracer(TracerProvider.noop()); |

Nested network and other spans

- Network calls are usually traced with OpenTelemetry auto-instrumentations through corresponding client implementation.

- If OpenTelemetry does not support tracing your network client, here are some considerations to help you decide the best course of action:

- Would tracing network calls improve observability for users or your ability to support them?

- Is your library a wrapper on top of public, documented RPC API?

- Would users need to get support from the underlying service in case of issues?

- Instrument the library and make sure to trace individual network tries.

- Would tracing those calls with spans be very verbose? or would it noticeably impact performance?

- Use logs with verbosity or span events

- logs can be correlated to parent (public API calls)

- while span events should be set on public API span.

- If they have to be spans (to carry and propagate unique trace context)

- put them behind a configuration option and disable them by default.

- Use logs with verbosity or span events

- If OpenTelemetry already supports tracing your network calls, you probably don’t want to duplicate it. There might be some exceptions:

- To support users without auto-instrumentation

- which might not work in certain environments or when users have concerns with monkey-patching.

- To enable custom or legacy correlation and context propagation protocols with underlying service.

- Enrich RPC spans with essential library or service-specific information not covered by auto-instrumentation.

- To support users without auto-instrumentation

A generic solution to avoid duplication is under construction.

Events

- Traces are a kind of signal that your apps can emit. Events (or logs) and traces complement, not duplicate, each other.

- Whenever you have something that should have a certain level of verbosity, logs are a better choice than traces.

- If your app uses logging or some similar module, the logging module might already have OpenTelemetry integration.

- To find out, see the registry. - https://opentelemetry.io/ecosystem/registry/

- Integrations usually stamp active trace context on all logs, so users can correlate them.

- If your language and ecosystem don’t have common logging support, use span events to share additional app details.

- Events maybe more convenient if you want to add attributes as well.

- As a rule of thumb, use events or logs for verbose data instead of spans.

- Always attach events to the span instance that your instrumentation created.

- Avoid using the active span if you can, since you don’t control what it refers to.

Context propagation

Extracting context

- If you work on a library or a service that receives upstream calls

- such as a web framework or a messaging consumer,extract context from the incoming request or message.

- OpenTelemetry provides the Propagator API, which hides specific propagation standards and reads the trace Context from the wire.

- In case of a single response, there is just one context on the wire, which becomes the parent of the new span the library creates.

- After you create a span, pass new trace context to the application code (callback or handler), by making the span active;

- if possible, do this explicitly.

- In the case of a messaging system, you might receive more than one message at once.

- Received messages become links on the span you create.

The following Java example shows how to add trace context and activate a span.

1 | // extract the context |

Injecting context

- When you make an outbound call, you usually want to propagate context to the downstream service.

- In this case, create a new span to trace the outgoing call and use Propagator API to inject context into the message.

- There might be other cases where you might want to inject context, for example when creating messages for async processing.

The following Java example shows how to propagate context.

1 | Span span = tracer.spanBuilder("send") |

There might be some exceptions where you don’t need to propagate context

- Downstream service does not support metadata or prohibits unknown fields.

- Downstream service does not define correlation protocols.

- Consider adding support for context propagation in a future version.

- Downstream service supports custom correlation protocol.

- Best effort with custom propagator

- use OpenTelemetry trace context if compatible or generate and stamp custom correlation IDs on the span.

In-process

- Make your spans active or current, as this enables correlating spans with logs and any nested auto-instrumentations.

- If the library has a notion of context, support optional explicit trace context propagation in addition to active spans. - Go

- Put spans (trace context) created by library in the context explicitly, document how to access it.

- Allow users to pass trace context in your context.

- Within the library, propagate trace context explicitly. Active spans might change during callbacks.

- Capture active context from users on the public API surface as soon as you can, use it as a parent context for your spans.

- Pass context around and stamp attributes, exceptions, events on explicitly propagated instances.

- This is essential if you start threads explicitly

- do background processing or other things that can break due to async context flow limitations in your language. - Java

Additional considerations

Instrumentation registry

- Add your instrumentation library to the OpenTelemetry registry so users can find it.

Performance

- OpenTelemetry API is no-op and very performant when there is no SDK in the application.

- When OpenTelemetry SDK is configured, it consumes bound resources.

- Real-life applications, especially on the high scale, would frequently have head-based sampling configured.

- Sampled-out spans are affordable

- and you can check if the span is recording to avoid extra allocations and potentially expensive calculations while populating attributes.

- The following Java example shows to provide attributes for sampling and check span recording.

1 | // some attributes are important for sampling, they should be provided at creation time |

Error handling

- OpenTelemetry API does not fail on invalid arguments, never throws, and swallows exceptions, which means it’s forgiving at runtime.

- This way instrumentation issues do not affect application logic. Test the instrumentation to notice issues OpenTelemetry hides at runtime.

Testing

- Since OpenTelemetry has a variety of auto-instrumentations, try how your instrumentation interacts with other telemetry

- incoming requests, outgoing requests, logs, and so on.

- Use a typical application, with popular frameworks and libraries and all tracing enabled when trying out your instrumentation.

- For unit testing, you can usually mock or fake SpanProcessor and SpanExporter as in the following Java example:

1 |

|

组件

OpenTelemetry 让你无需使用特定供应商的 SDK 和工具就能生成和导出遥测数据 - 避免厂商锁定

规范

本节说明了针对所有实现的跨语言要求和期望。除了术语定义之外,规范还定义了以下内容

| Component | Desc |

|---|---|

| API | 定义了用于生成和关联跟踪、指标和日志数据的数据类型和操作 |

| SDK | 定义了 API 特定语言实现的要求 - 配置、数据处理和导出概念也在这里定义 |

| 数据 | 定义了 OpenTelemetry 协议(OTLP)和与供应商无关的、遥测后端可以提供支持的语义约定 |

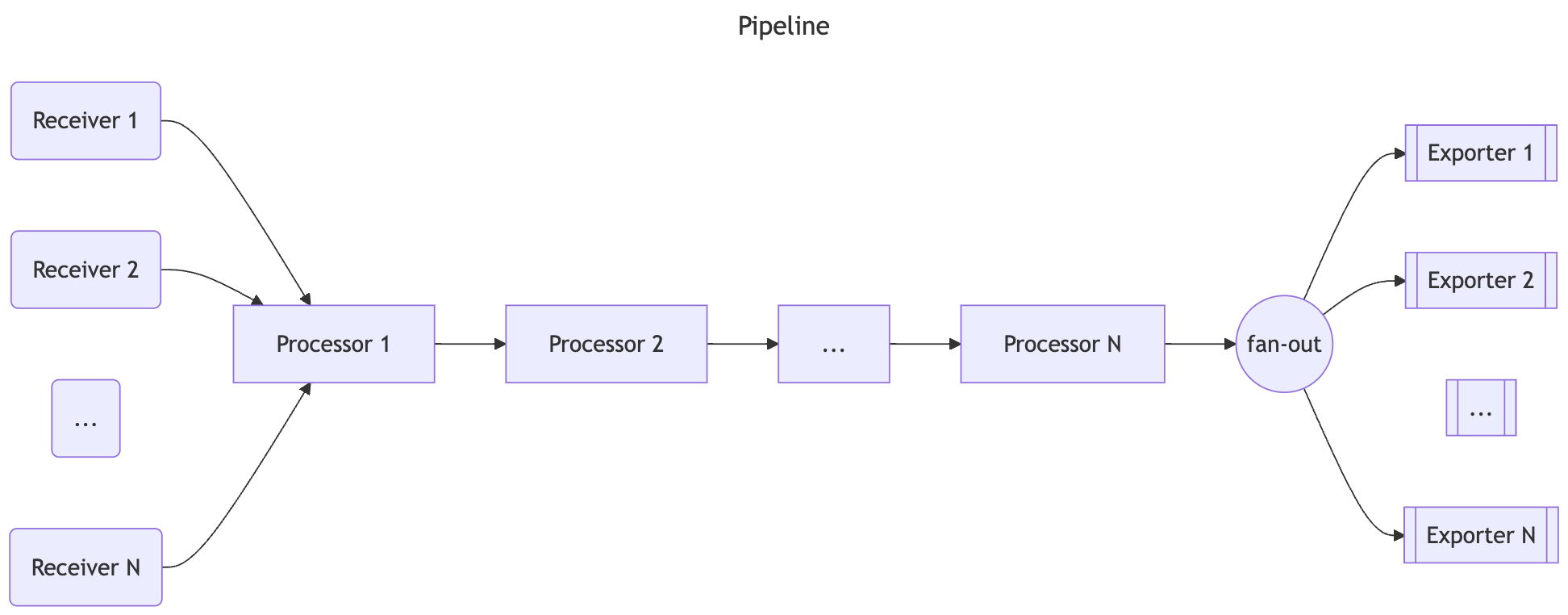

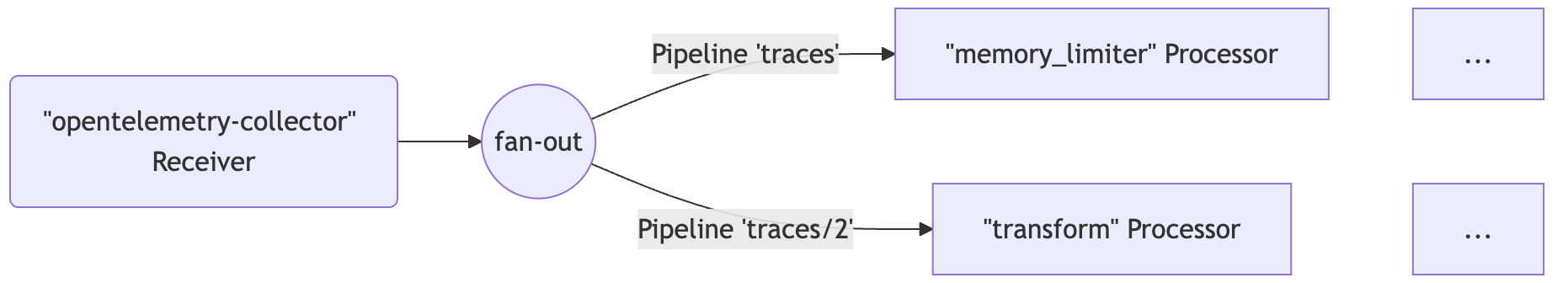

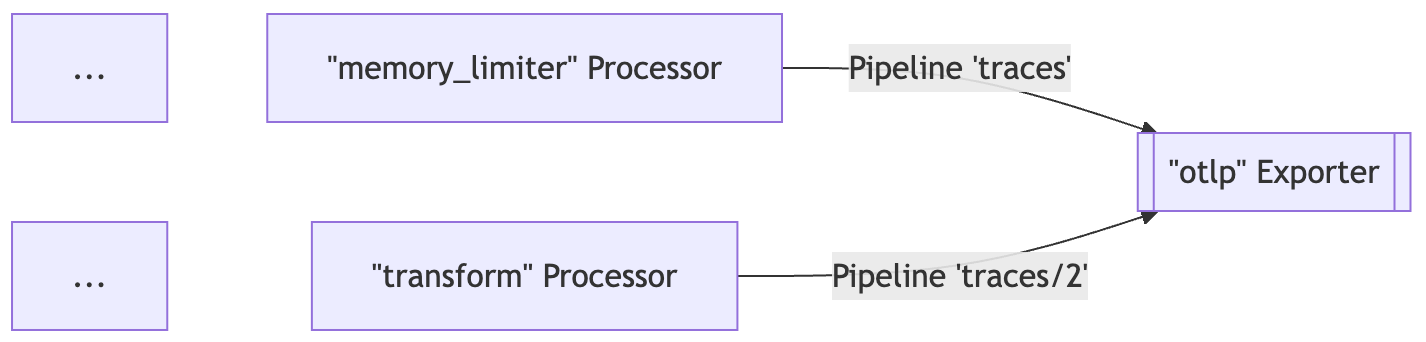

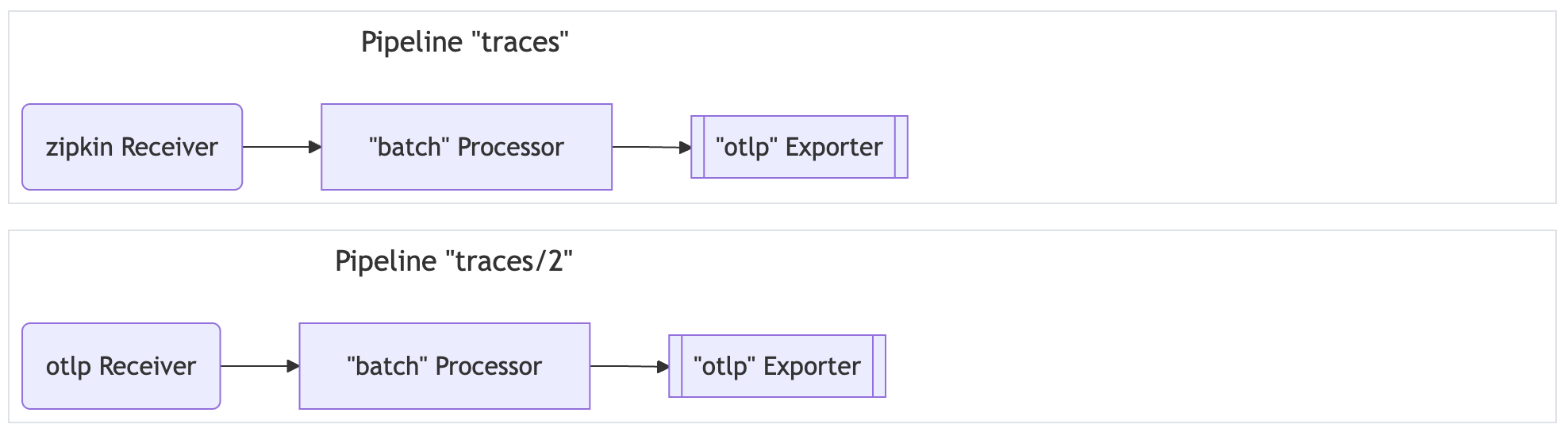

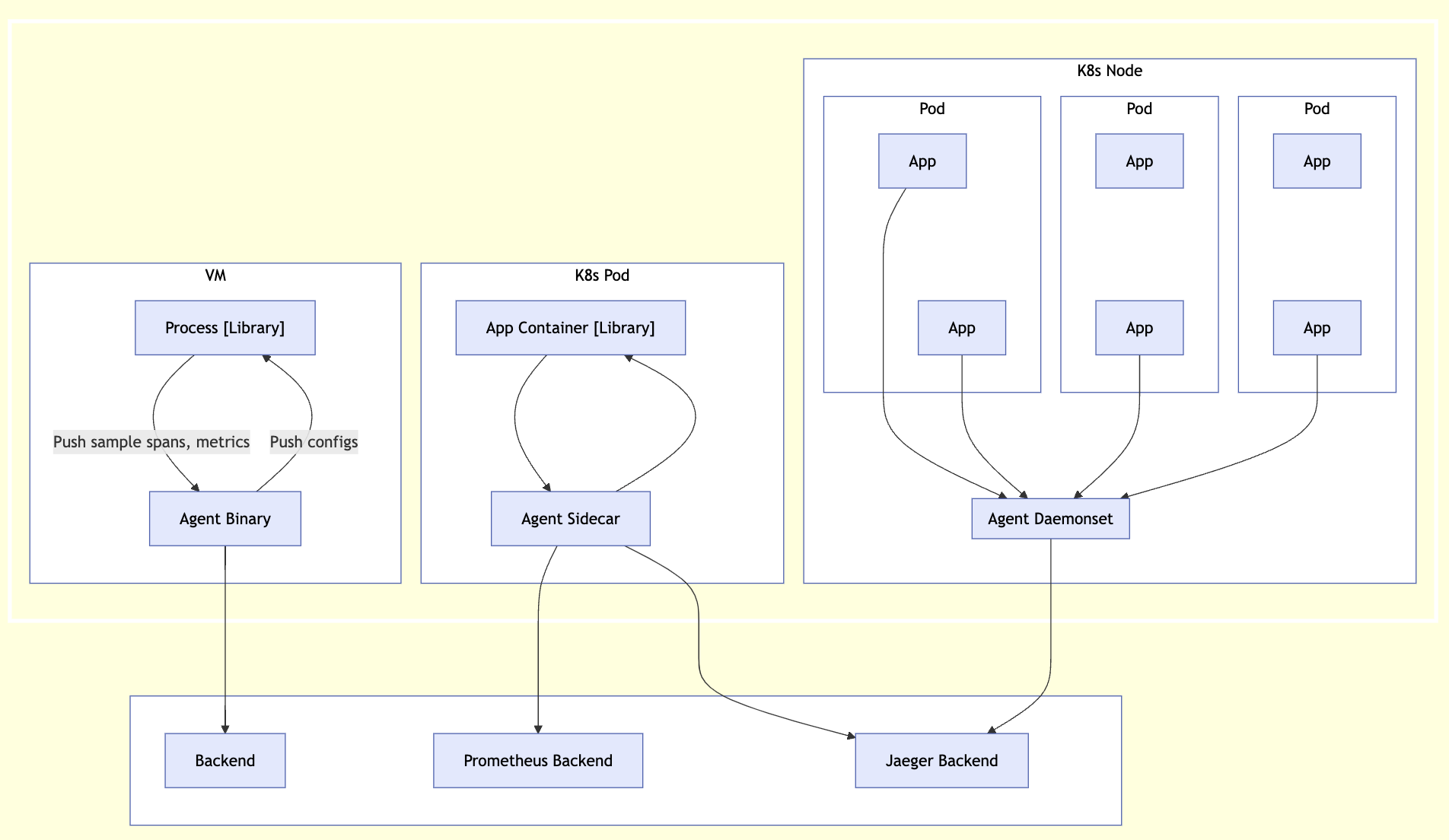

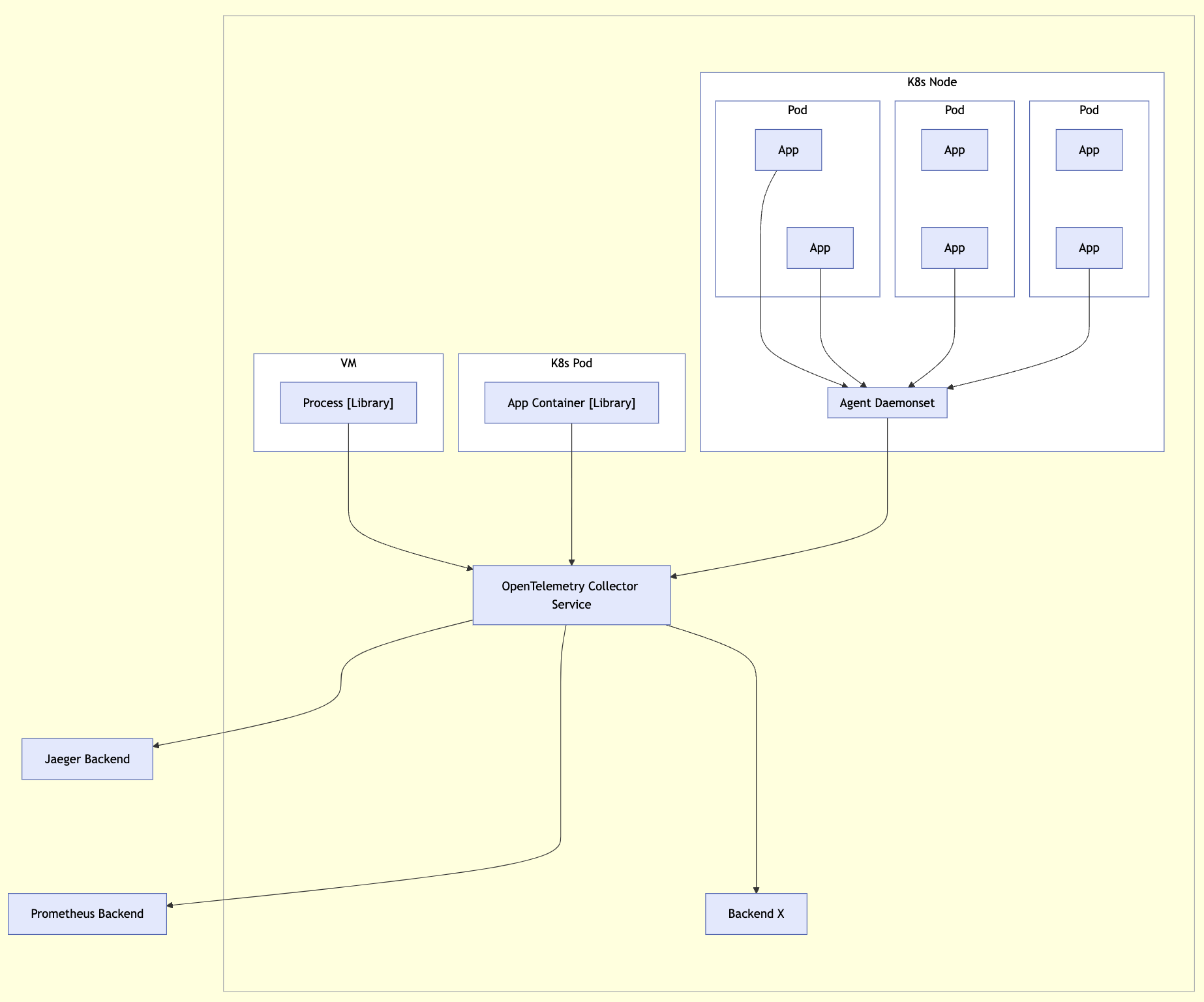

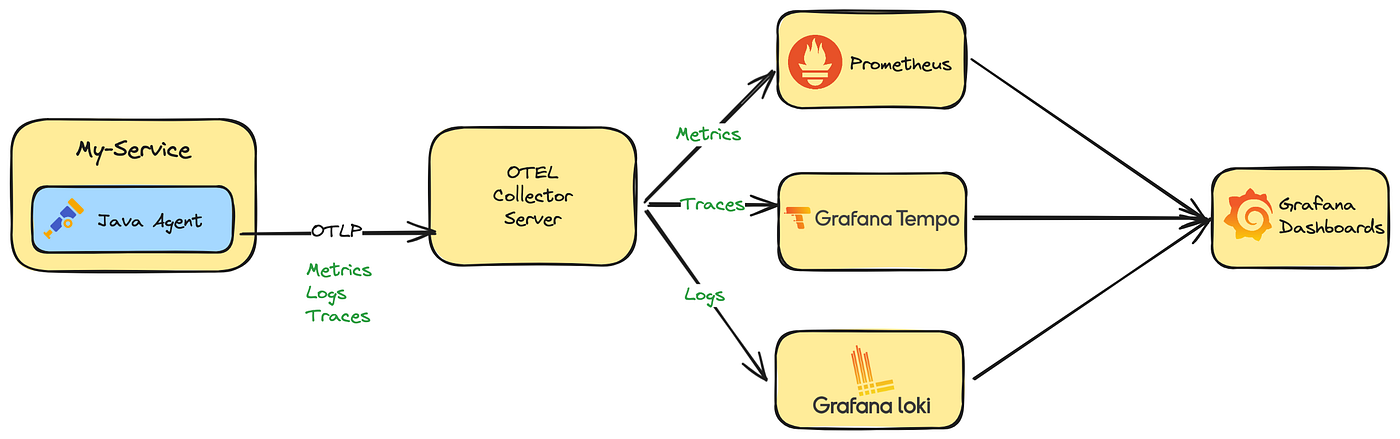

Collector

- OpenTelemetry Collector 是一个与供应商无关的代理,可以接收、处理和导出遥测数据

- 支持以多种格式接收遥测数据(例如 OTLP、Jaeger、Prometheus 以及许多商业/专有工具)并将数据发送到一个或多个后端

- 支持在导出之前处理和过滤遥测数据

API + SDK

- OpenTelemetry 还提供语言 SDK,允许你使用所选语言的 OpenTelemetry API 生成遥测数据,并将这些数据导出到首选后端

- 这些 SDK 还允许你结合常见库和框架的插桩库,以便你可以将其用于应用程序中的手动插桩

插桩库

- OpenTelemetry 支持通过大量组件来为所支持的语言根据流行的库和框架生成相关遥测数据

- 来自 HTTP 库的入站和出站 HTTP 请求将生成有关这些请求的数据

- 让流行的库能够开箱即用地进行观测而无需拉入独立的组件中是一个长期目标

导出器

- Send telemetry to the OpenTelemetry Collector to make sure it’s exported correctly.

- Using the Collector in production environments is a best practice.

- To visualize your telemetry, export it to a backend such as Jaeger, Zipkin, Prometheus, or a vendor-specific backend.

- Among exporters, OTLP exporters are designed with the OpenTelemetry data model in mind, emitting OTel data without any loss of information.

- many tools that operate on telemetry data support OTLP (such as Prometheus, Jaeger, and most vendors)

- providing you with a high degree of flexibility when you need it.

零代码插桩

- 如果适用,OpenTelemetry 的特定语言实现将提供一种无需修改源代码即可对应用程序进行插桩的方法

- 虽然底层机制取决于使用的语言,但至少会将 OpenTelemetry API 和 SDK 能力添加到你的应用程序中

- 此外,它们还可能添加一组插桩库和导出器依赖项

资源检测器

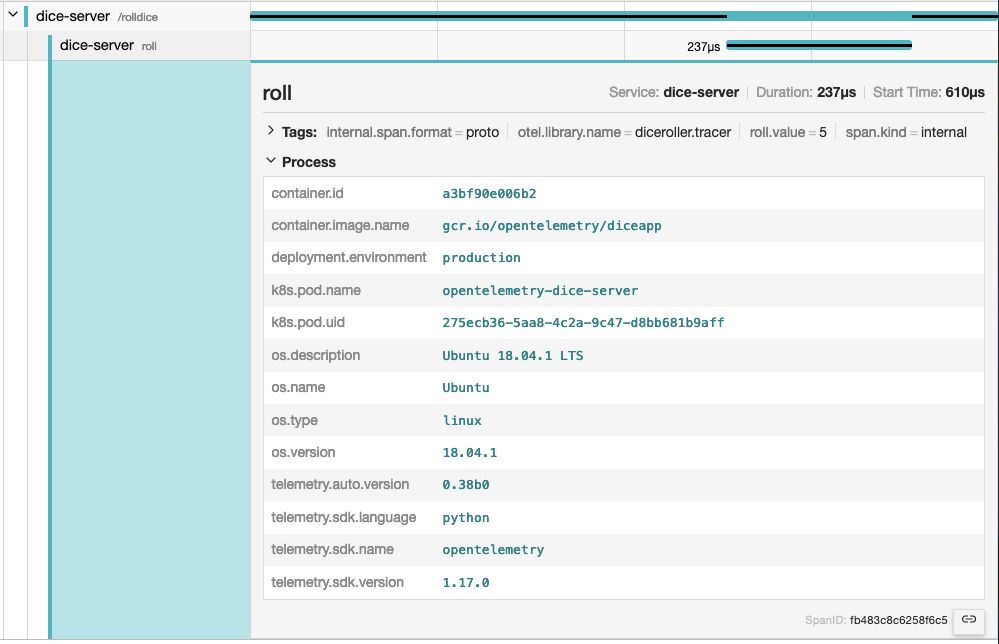

- 资源以资源属性表示生成遥测数据的实体

- 在 Kubernetes 上运行的容器中生成遥测数据的进程具有 Pod 名称、命名空间和可能的 Deployment 名称 - 这三个属性都可以包含在资源中

- 特定语言实现提供了从环境变量 OTEL_RESOURCE_ATTRIBUTES 和许多常见实体(如进程运行时、服务、主机或操作系统)中检测资源的功能

跨服务传播器

- 传播是一种用于跨服务和进程边界传递信息的机制

- 虽然不限于跟踪,但它是允许跟踪在跨越进程和网络边界的服务中建立系统因果关系的信息

- 对于绝大多数场景,上下文传播是通过插桩库为你完成的

- 但如果需要,你可以自己使用 Propagators 来序列化和反序列化跨领域的关注点, 例如 span 的上下文和 baggage

采样器

- 采样是限制系统生成跟踪数量的过程,特定语言的实现提供了几种头部采样器

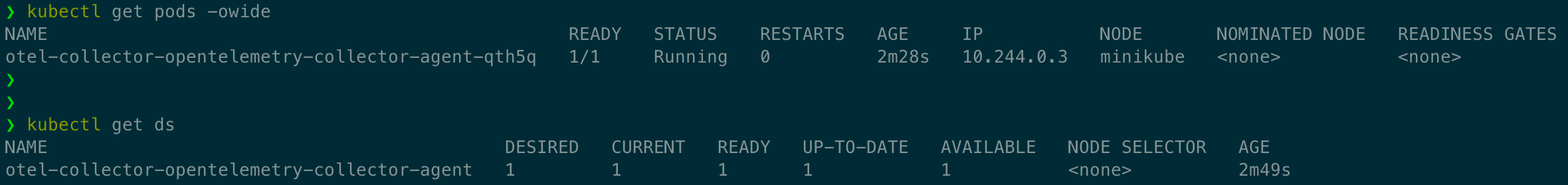

K8s Operator

- OpenTelemetry Operator 是 Kubernetes Operator 的一种实现

- Operator 管理 OpenTelemetry Collector 以及使用 OpenTelemetry 对工作负载进行自动插桩

函数即服务资产

- OpenTelemetry 支持多种由不同云服务商提供的函数即服务的监控方法

- 社区目前提供预构建的 Lambda 层,能够自动对你的应用进行插桩, 另外在手动或自动对应用进行插桩时可以使用的独立 Collector Lambda 层选项

语义约定

- OpenTelemetry 定义了语义约定, 为不同类型的操作和数据指定通用名称

- 使用语义约定的好处是遵循通用的命名方案,可以在代码库、库和平台之间实现标准化

- 语义约定适用于链路追踪、指标、日志和资源

Resources

Introduction

- A resource represents the entity producing telemetry as resource attributes.

- For example, a process producing telemetry that is running in a container on Kubernetes

- has a process name, a pod name, a namespace, and possibly a deployment name.

- All four of these attributes can be included in the resource.

- In your observability backend, you can use resource information to better investigate interesting behavior.

- if your trace or metrics data indicate latency in your system, you can narrow it down to a specific container, pod, or Kubernetes deployment.

- A resource is added to the TraceProvider or MetricProvider when they are created during initialization. This association cannot be changed later.

- After a resource is added, all spans and metrics produced from a Tracer or Meter from the provider will have the resource associated with them.

If you use Jaeger as your observability backend, resource attributes are grouped under the Process tab

Semantic Attributes with SDK-provided Default Value

- There are attributes provided by the OpenTelemetry SDK.

- One of them is the service.name, which represents the logical name of the service.

- By default, SDKs will assign the value unknown_service for this value

- so it is recommended to set it explicitly, either in code or via setting the environment variable OTEL_SERVICE_NAME.

- Additionally, the SDK will also provides the following resource attributes to identify itself

- telemetry.sdk.name

- telemetry.sdk.language

- telemetry.sdk.version

Resource Detectors

- Most language-specific SDKs provide a set of resource detectors that can be used to automatically detect resource information from the environment.

- Common resource detectors include

- Operating System

- Host

- Process and Process Runtime

- Container

- Kubernetes

- Cloud-Provider-Specific Attributes

Custom resources

- You can also provide your own resource attributes.

- You can either provide them in code or via populating the environment variable OTEL_RESOURCE_ATTRIBUTES.

- If applicable, use the semantic conventions for your resource attributes.

- For example, you can provide the name of your deployment environment using deployment.environment

1 | env OTEL_RESOURCE_ATTRIBUTES=deployment.environment=production yourApp |

Instrumentation scope

- The Instrumentation scope represents a logical unit within the application code with which the emitted telemetry can be associated.

- Developers can decide what denotes a reasonable instrumentation scope.

- For example, they can select a module, a package, or a class as the instrumentation scope.

- In the case of a library or framework, a common approach is to use an identifier as scope that is unique to the library or framework

- such as a fully qualified name and version of the library or framework.

- If the library itself doesn’t have built-in OpenTelemetry instrumentation, and an instrumentation library is used instead

- use the name and version of the instrumentation library as the instrumentation scope.

- The instrumentation scope is defined by a name and version pair when a tracer, meter or logger instance is obtained from a provider.

- Each span, metric, or log record created by the instance is then associated with the provided instrumentation scope.

- In your observability backend, scoping allows you to slice and dice your telemetry data by scope

- for example to see which of your users are using which version of a library

- what the performance of that library version is

- to pin point a problem to a specific module of your application.

- The following diagram illustrates a trace with multiple instrumentation scopes. The different scopes are represented by different colors

- At the top the /api/placeOrder span is generated by the used HTTP framework.

- The spans in green (CheckoutService::placeOrder, prepareOrderItems and checkout) are application code, grouped by the CheckoutService class.

- The spans for CartService::getCart and ProductService::getProduct are also application code

- grouped by the CartService and ProductService classes.

- The spans in orange (Cache::find) and light blue (DB::query) are library code, grouped by the library name and version.

Sampling

Learn about sampling and the different sampling options available in OpenTelemetry.

- With traces, you can observe requests as they move from one service to another in a distributed system.

- Tracing is highly practical for both high-level and in-depth analysis of systems.

- However, if the large majority of your requests are successful and finish with acceptable latency and no errors

- you do not need 100% of your traces to meaningfully observe your applications and systems. You just need the right sampling.

Terminology

- It’s important to use consistent terminology when discussing sampling.

- A trace or span is considered “sampled” or “not sampled”

- Sampled

- A trace or span is processed and exported.

- Because it is chosen by the sampler as a representative of the population, it is considered “sampled”.

- Not sampled

- A trace or span is not processed or exported.

- Because it is not chosen by the sampler, it is considered “not sampled”.

- Sampled

Why sampling?

- Sampling is one of the most effective ways to reduce the costs of observability without losing visibility.

- Although there are other ways to lower costs, such as filtering or aggregating data

- these other methods do not adhere to the concept of representativeness

- which is crucial when performing in-depth analysis of application or system behavior.

- Representativeness is the principle that a smaller group can accurately represent a larger group.

- Additionally, representativeness can be mathematically verified

- meaning that you can have high confidence that a smaller sample of data accurately represents the larger group.

- Additionally, the more data you generate, the less data you actually need to have a representative sample.

- For high-volume systems, is quite common for a sampling rate of 1% or lower to very accurately represent the other 99% of data.

When to sample

- Consider sampling if you meet any of the following criteria:

- You generate 1000 or more traces per second.

- Most of your trace data represents healthy traffic with little variation in data.

- You have some common criteria, like errors or high latency, that usually means something is wrong.

- You have domain-specific criteria you can use to determine relevant data beyond errors and latency.

- You can describe some common rules that determine if data should be sampled or dropped.

- You have a way to tell your services apart, so that high- and low-volume services are sampled differently.

- You have the ability to route unsampled data (for “just in case” scenarios) to low-cost storage systems.

- Finally, consider your overall budget.

- If you have limited budget for observability, but can afford to spend time to effectively sample, then sampling can generally be worth it.

When not to sample

- Sampling might not be appropriate for you. You might want to avoid sampling if you meet any of the following criteria:

- You generate very little data (tens of small traces per second or lower).

- You only use observability data in aggregate, and can thus pre-aggregate data.

- You are bound by circumstances such as regulation that prohibit dropping data (and cannot route unsampled data to low-cost storage).

- Finally, consider the following three costs associated with sampling:

- The direct cost of compute to effectively sample data, such as a tail sampling proxy.

- The indirect engineering cost of maintaining effective sampling methodologies as more application, systems, and data are involved.

- The indirect opportunity cost of missing critical information with ineffective sampling techniques.

- Sampling, while effective at reducing observability costs, might introduce other unexpected costs if not performed well.

- It could be cheaper to allocate more resources for observability instead, either with a vendor or compute when self-hosting

- depending on your observability backend, the nature of your data, and your attempts to sample effectively.

Head Sampling

- Head sampling is a sampling technique used to make a sampling decision as early as possible.

- A decision to sample or drop a span or trace is not made by inspecting the trace as a whole.

- For example, the most common form of head sampling is Consistent Probability Sampling.

- This is also be referred to as Deterministic Sampling.

- In this case, a sampling decision is made based on the trace ID and the desired percentage of traces to sample.

- This ensures that whole traces are sampled - no missing spans - at a consistent rate, such as 5% of all traces

- The upsides to head sampling are:

- Easy to understand

- Easy to configure

- Efficient

- Can be done at any point in the trace collection pipeline

- The primary downside to head sampling is that it is not possible to make a sampling decision based on data in the entire trace.

- For example, you cannot ensure that all traces with an error within them are sampled with head sampling alone.

- For this situation and many others, you need tail sampling.

Tail Sampling

- Tail sampling is where the decision to sample a trace takes place by considering all or most of the spans within the trace.

- Tail Sampling gives you the option to sample your traces based on specific criteria derived from different parts of a trace

- which isn’t an option with Head Sampling.

- Some examples of how you can use Tail Sampling include:

- Always sampling traces that contain an error

- Sampling traces based on overall latency

- Sampling traces based on the presence or value of specific attributes on one or more spans in a trace

- for example, sampling more traces originating from a newly deployed service

- Applying different sampling rates to traces based on certain criteria

- such as when traces only come from low-volume services versus traces with high-volume services.

- As you can see, tail sampling allows for a much higher degree of sophistication in how you sample data.

- For larger systems that must sample telemetry

- it is almost always necessary to use Tail Sampling to balance data volume with the usefulness of that data.

- There are three primary downsides to tail sampling today:

- Tail sampling can be difficult to implement.

- Depending on the kind of sampling techniques available to you, it is not always a “set and forget” kind of thing.

- As your systems change, so too will your sampling strategies.

- For a large and sophisticated distributed system, rules that implement sampling strategies can also be large and sophisticated.

- Tail sampling can be difficult to operate.

- The component(s) that implement tail sampling must be stateful systems that can accept and store a large amount of data.

- Depending on traffic patterns, this can require dozens or even hundreds of compute nodes that all utilize resources differently.

- Furthermore, a tail sampler might need to “fall back” to less computationally intensive sampling techniques

- if it is unable to keep up with the volume of data it is receiving.

- it is critical to monitor tail-sampling components to ensure that they have the resources they need to make the correct sampling decisions.

- Tail samplers often end up as vendor-specific technology today.

- If you’re using a paid vendor for Observability

- the most effective tail sampling options available to you might be limited to what the vendor offers.

- Tail sampling can be difficult to implement.

- Finally, for some systems, tail sampling might be used in conjunction with Head Sampling.

- a set of services that produce an extremely high volume of trace data might first use head sampling to sample only a small percentage of traces

- and then later in the telemetry pipeline use tail sampling to make more sophisticated sampling decisions before exporting to a backend.

- This is often done in the interest of protecting the telemetry pipeline from being overloaded.

Support

Collector

The OpenTelemetry Collector includes the following sampling processors:

- Probabilistic Sampling Processor

- Tail Sampling Processor

Language SDKs

- https://opentelemetry.io/zh/docs/languages/go/sampling/

- https://opentelemetry.io/zh/docs/languages/js/sampling/

Vendors

- Many vendors offer comprehensive sampling solutions

- that incorporate head sampling, tail sampling, and other features that can support sophisticated sampling needs.

- These solutions may also be optimized specifically for the vendor’s backend.

- If you are sending telemetry to a vendor, consider using their sampling solutions.

Distributions

A distribution, not to be confused with a fork, is customized version of an OpenTelemetry component.

- The OpenTelemetry projects consists of multiple components that support multiple signals.

- Any reference implementation can be customized as a distribution.

What is a distribution?

- A distribution is a customized version of an OpenTelemetry component.

- A distribution is a wrapper around an upstream OpenTelemetry repository with some customizations.

- Distributions are not to be confused with forks.

- Customizations in a distribution may include:

- Scripts to ease use or customize use for a specific backend or vendor

- Changes to default settings required for a backend, vendor, or end-user

- Additional packaging options that may be vendor or end-user specific

- Test, performance, and security coverage beyond what OpenTelemetry provides

- Additional capabilities beyond what OpenTelemetry provides

- Less capabilities from what OpenTelemetry provides

- Distributions broadly fall into the following categories:

- Pure

- These distributions provide the same functionality as upstream and are 100% compatible.

- Customizations typically enhance the ease of use or packaging.

- These customizations may be backend, vendor, or end-user specific.

- Plus

- These distributions provide added functionalities on top of upstream through additional components.

- Examples include instrumentation libraries or vendor exporters not upstreamed to the OpenTelemetry project.

- Minus

- These distributions provide a subset of functionality from upstream.

- Examples of this include the removal of

- instrumentation libraries

- receivers, processors, exporters, or extensions found in the OpenTelemetry Collector project.

- These distributions may be provided to increase supportability and security considerations.

- Pure

Who can create a distribution?

- Anyone can create a distribution. Today, several vendors offer distributions.

- end-users can consider creating a distribution

- if they want to use components in the Registry that are not upstreamed to the OpenTelemetry project.

Contribution or distribution?

- Before you read on and learn how you can create your own distribution

- ask yourself if your additions on top of an OpenTelemetry component would be beneficial for everyone

- and therefore should be included in the reference implementations:

- Can your scripts for “ease of use” be generalized?

- Can your changes to default settings be the better option for everyone?

- Are your additional packaging options really specific?

- Might your test, performance and security coverage work with the reference implementation as well?

- Have you checked with the community if your additional capabilities could be part of the standard?

Glossary

Definitions and conventions for telemetry terms as used in OpenTelemetry.

- This glossary defines terms and concepts that are new to the OpenTelemetry project

- and clarifies OpenTelemetry-specific uses of terms common in the observability field.

Aggregation

- The process of combining multiple measurements into exact or estimated statistics about the measurements

- that took place during an interval of time, during program execution.

- Used by the Metric Data source.

API

- Application Programming Interface.

- In the OpenTelemetry project, used to define how telemetry data is generated per Data source.

Application

- One or more Services designed for end users or other applications.

APM

- Application Performance Monitoring is about monitoring software applications

- their performance (speed, reliability, availability, and so on) to detect issues, alerting and tooling for finding the root cause.

Attribute

- OpenTelemetry term for Metadata.

- Adds key-value information to the entity producing telemetry.

- Used across Signals and Resources

Automatic instrumentation

- Refers to telemetry collection methods that do not require the end-user to modify application’s source code.

- Methods vary by programming language, and examples include bytecode injection or monkey patching.

Baggage

- A mechanism for propagating Metadata to help establish a causal relationship between events and services.

Client library

- See Instrumented library.

Client-side app

- A component of an Application that is not running inside a private infrastructure and is typically used directly by end-users.

- Examples of client-side apps are browser apps, mobile apps, and apps running on IoT devices.

Collector

- The OpenTelemetry Collector, or Collector for short, is a vendor-agnostic implementation on how to receive, process, and export telemetry data.

- A single binary that can be deployed as an agent or gateway.

- Spelling: When referring to the OpenTelemetry Collector, always capitalize Collector.

- Use just “Collector” if you are using Collector as an adjective — for example, “Collector configuration”.

Contrib

core - Instrumentation Libraries + Collector

non-core - vendor Exporter

- Several Instrumentation Libraries and the Collector offer a set of core capabilities

- as well as a dedicated contrib repository for non-core capabilities including vendor Exporters.

Context propagation

- Allows all Data sources to share an underlying context mechanism for storing state and accessing data across the lifespan of a Transaction.

DAG

- Directed acyclic graph

Data source

- See Signal

Dimension

- A term used specifically by Metrics. See Attribute.

Distributed tracing

- Tracks the progression of a single Request, called a Trace, as it is handled by Services that make up an Application.

- A Distributed trace transverses process, network and security boundaries.

Distribution

- A distribution is a wrapper around an upstream OpenTelemetry repository with some customizations.

Event

- Something that happened where representation depends on the Data source. For example, Spans.

Exporter

- Provides functionality to emit telemetry to consumers. Exporters can be push- or pull-based.

Field

Log Records

- A term used specifically by Log Records.

- Metadata can be added through defined fields, including Attributes and Resource.

- Other fields may also be considered Metadata, including severity and trace information. See the field spec.

gRPC

- A high-performance, open source universal RPC framework.

Instrumented library

- Denotes the Library for which the telemetry signals (Traces, Metrics, Logs) are gathered.

Instrumentation library

- Denotes the Library that provides the instrumentation for a given Instrumented library.

- Instrumented library and Instrumentation library can be the same Library if it has built-in OpenTelemetry instrumentation.

Label

- A term used specifically by Metrics. See Metadata.

Library

- A language-specific collection of behavior invoked by an interface.

Log

- Sometimes used to refer to a collection of Log records.

- Can be ambiguous since people also sometimes use Log to refer to a single Log record.

- Where ambiguity is possible, use additional qualifiers, for example, Log record.

Log record

- A recording of an Event.

- record includes a timestamp indicating when the Event happened as well as other data that describes what happened, where it happened, and so on.

Metadata

Metrics - Labels

Logs - Fields

- A key-value pair, for example foo=”bar”, added to an entity producing telemetry.

- OpenTelemetry calls these pairs Attributes.

- In addition, Metrics have Dimensions an Labels, while Logs have Fields.

Metric

time series

- Records a data point, either raw measurements or predefined aggregation, as time series with Metadata.

OC

- Short form for OpenCensus.

OT

- Short form for OpenTracing.

OTel

- Short form for OpenTelemetry.

- Spelling: Write OTel, not OTEL.

OpAMP

- Abbreviation for the Open Agent Management Protocol.

- Spelling - Write OpAMP, not OPAMP nor opamp in descriptions or instructions.

OpenCensus

- Precursor to OpenTelemetry.

OpenTracing

- Precursor to OpenTelemetry.

OpenTelemetry

- Formed through a merger of the OpenTracing and OpenCensus projects

- a collection of APIs, SDKs, and tools that you can use to instrument, generate, collect, and export telemetry data such as metrics, logs, and traces.

- Spelling: OpenTelemetry should always be a single unhyphenated word and capitalized as shown.

OTelCol

- Short form for OpenTelemetry Collector.

OTEP

- An acronym for OpenTelemetry Enhancement Proposal

- Spelling: Write “OTEPs” as plural form. Don’t write OTep or otep in descriptions.

OTLP

- Short for OpenTelemetry Protocol.

Propagators

- Used to serialize and deserialize specific parts of telemetry data such as span context and Baggage in Spans.

Proto

OpenTelemetry protocol (OTLP) specification and Protobuf definitions

- Language independent interface types.

Receiver

- The term used by the Collector to define how telemetry data is received. Receivers can be push- or pull-based.

Resource

- Captures information about the entity producing telemetry as Attributes.

- a process producing telemetry that is running in a container on Kubernetes

- has a process name, a pod name, a namespace, and possibly a deployment name.

- All these attributes can be included in the Resource.

REST

- Short for Representational State Transfer

RPC

- Short for Remote Procedure Call

Sampling

- A mechanism to control the amount of data exported.

- Most commonly used with the Tracing Data Source.

SDK

- Short for Software Development Kit.

- Refers to a telemetry SDK that denotes a Library that implement the OpenTelemetry API.

Semantic conventions

- Defines standard names and values of Metadata in order to provide vendor-agnostic telemetry data.

Service

- A component of an Application.

- Multiple instances of a Service are typically deployed for high availability and scalability.

- A Service can be deployed in multiple locations.

Signal

- One of Traces, Metrics or Logs.

Span

- Represents a single operation within a Trace.

Span link

- A span link is a link between causally-related spans.

Specification

- Describes the cross-language requirements and expectations for all implementations.

Status

- The result of the operation.

- Typically used to indicate whether an error occurred.

Tag

- See Metadata

Trace

- A DAG of Spans, where the edges between Spans are defined as parent-child relationship.

Tracer

- Responsible for creating Spans.

Transaction

- See Distributed Tracing.

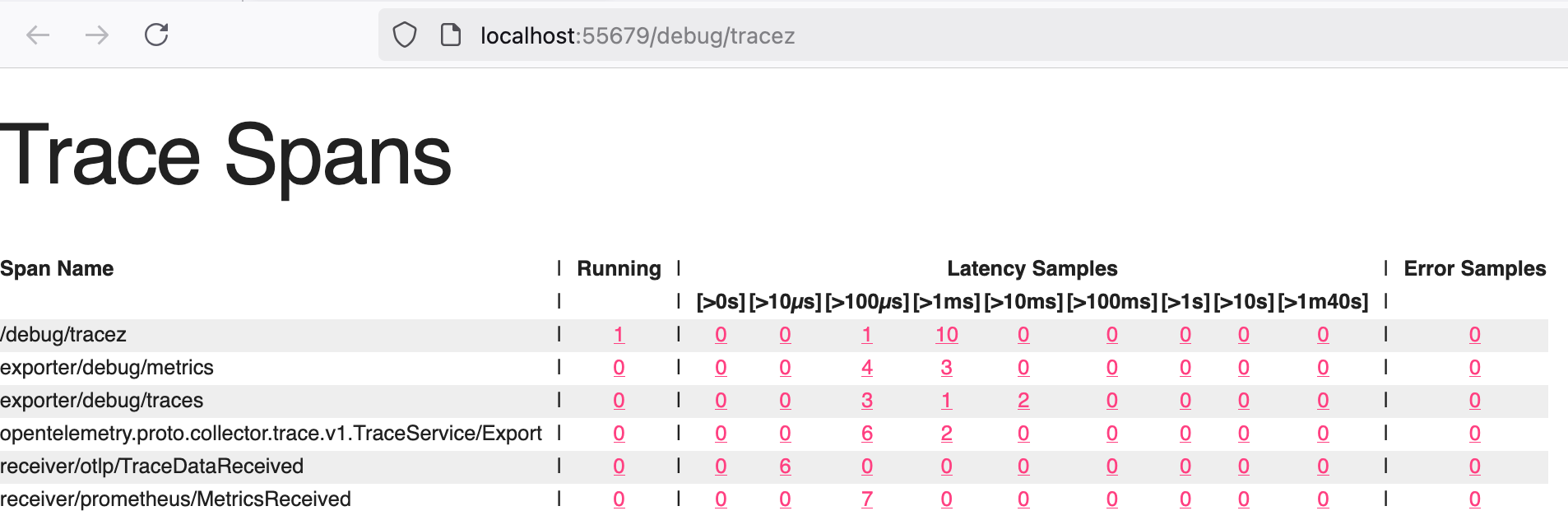

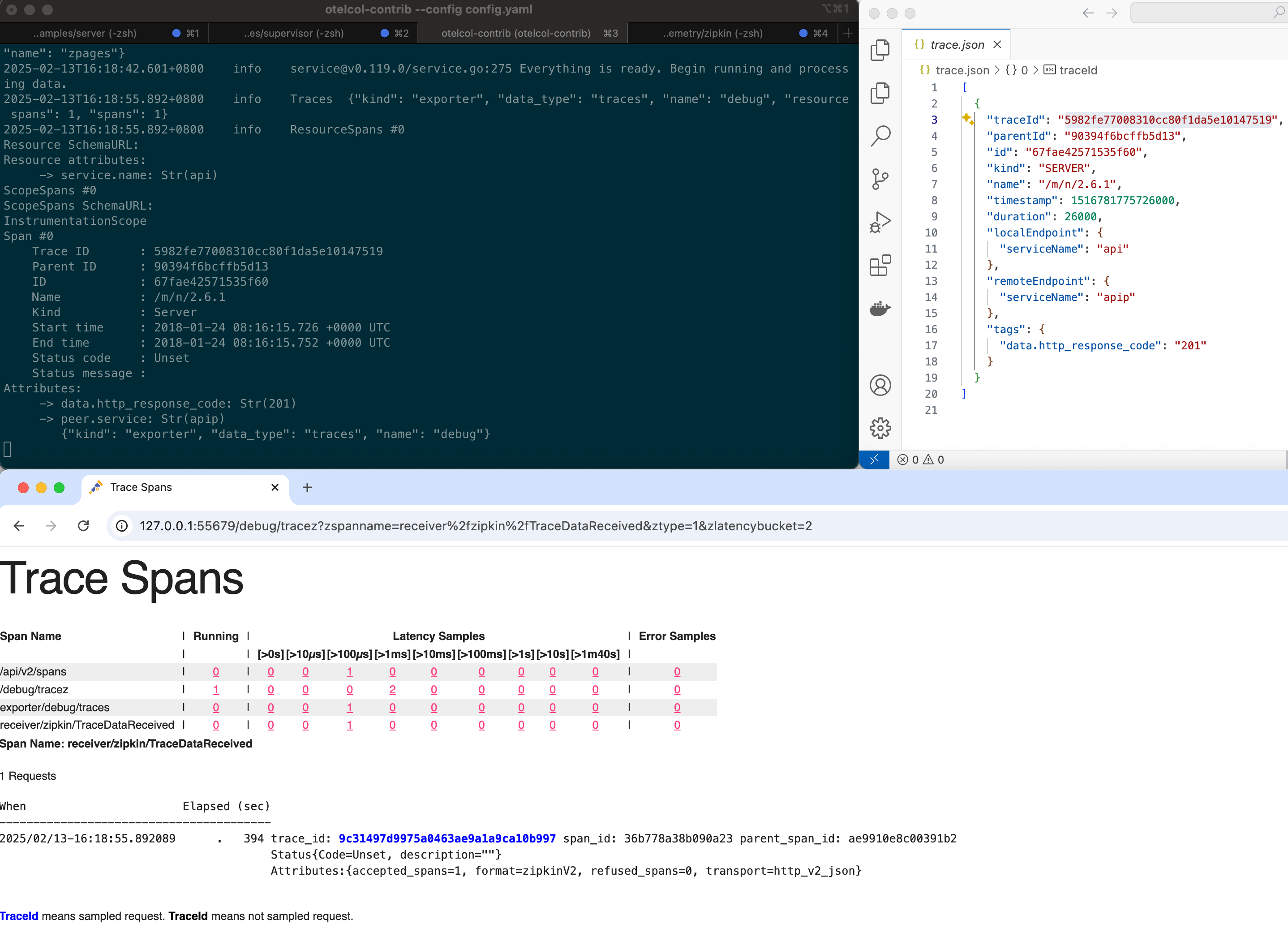

zPages

development + debug

- An in-process alternative to external exporters.

- When included, they collect and aggregate tracing and metrics information in the background

- this data is served on web pages when requested.

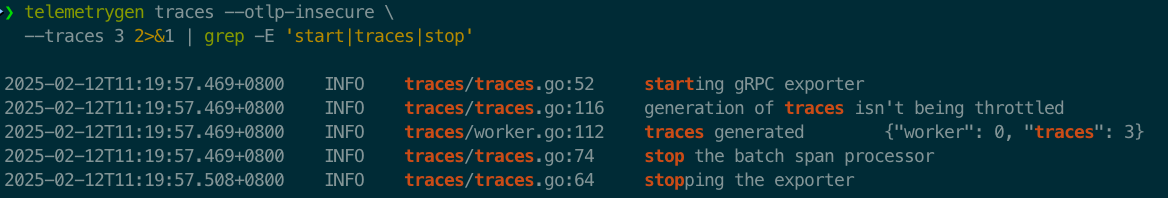

演示

TBD

Language APIs & SDKs

zero-code solutions - Go, .NET, PHP, Python, Java and JavaScript

- OpenTelemetry code instrumentation is supported for the languages listed in the Statuses and Releases table below.

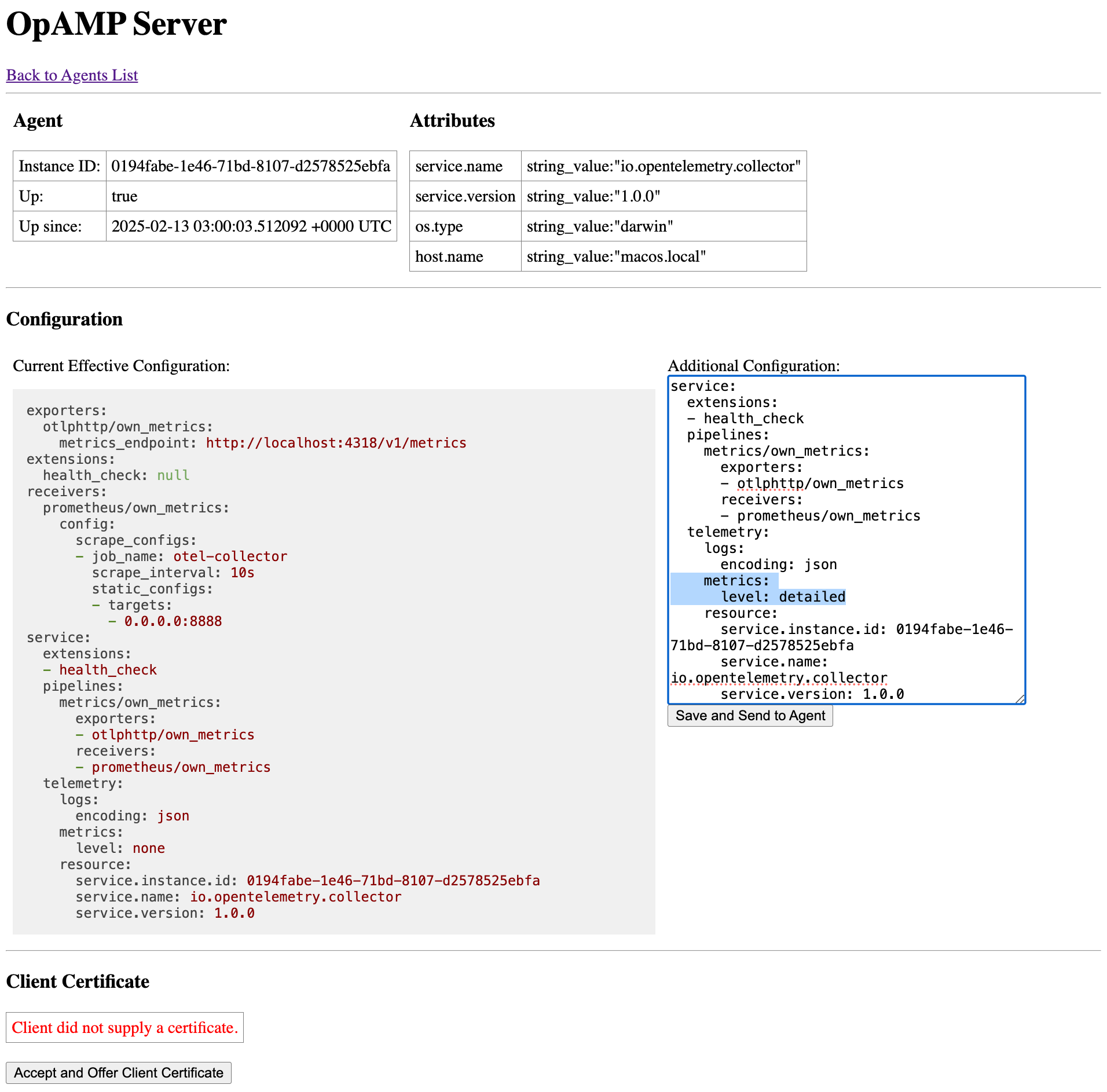

- Unofficial implementations for other languages are available as well. You can find them in the registry.