MCP - Introduction

Background

- Generative AI applications are a great step forward as they often let the user interact with the app using natural language prompts.

- However, as you invest more time and resources in such apps, you want to integrate functionality and resources in a way that is easy to extend, lets your app work with more than one model, and handles each model's intricacies.

- In short, building Gen AI apps is easy to begin with, but as they grow and become more complex, you need to start defining an architecture and will likely need to rely on a standard to ensure your apps are built in a consistent way.

- This is where MCP comes in to organize things and provide a standard.

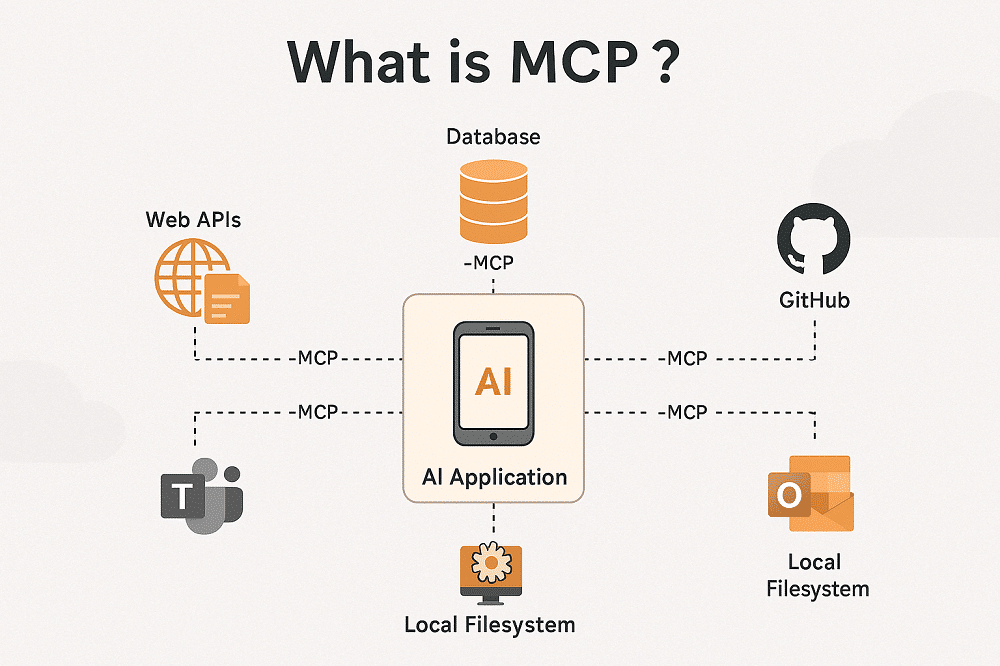

What Is the Model Context Protocol (MCP)?

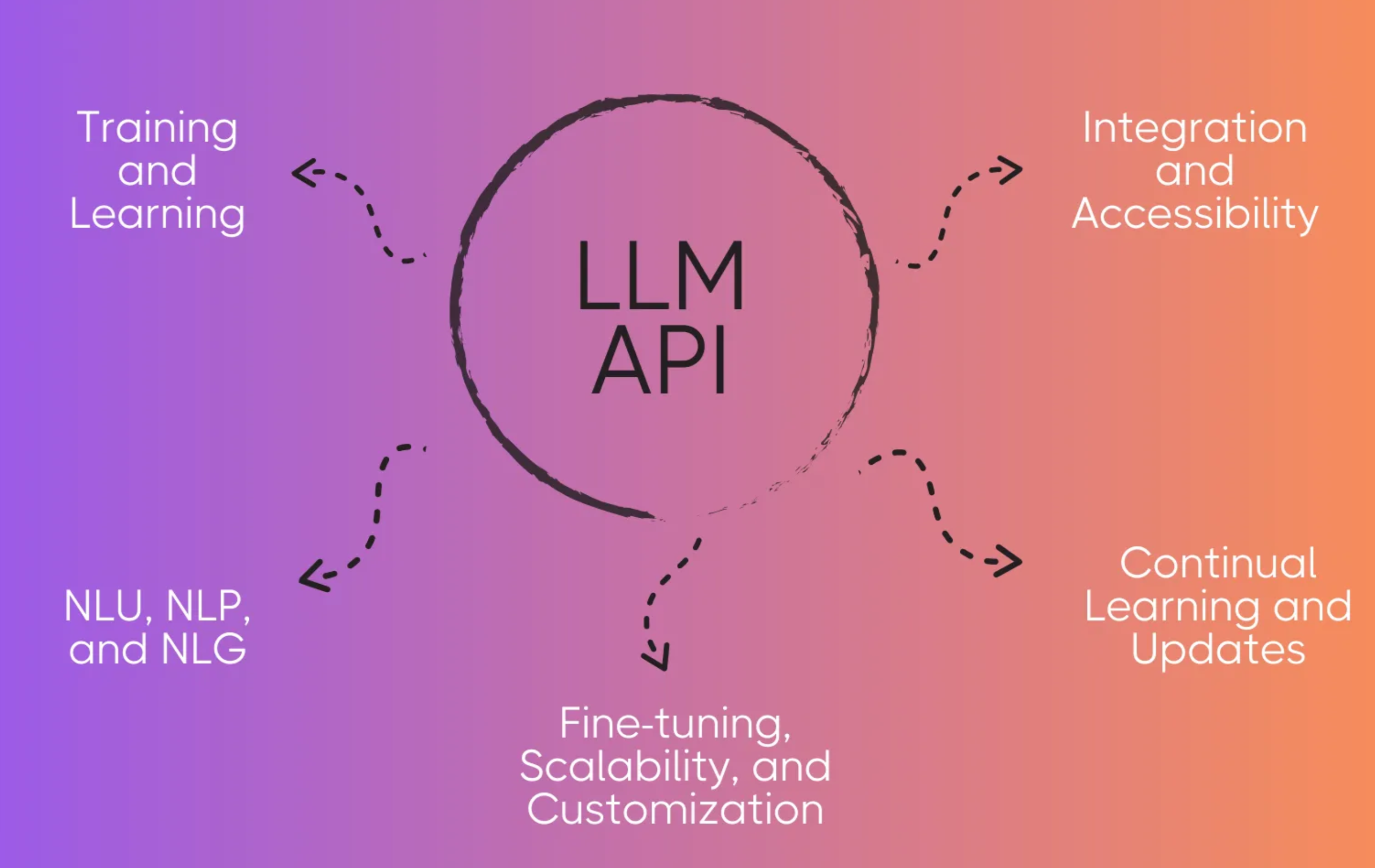

- The Model Context Protocol (MCP) is an open, standardized interface that allows Large Language Models (LLMs) to interact seamlessly with external tools, APIs, and data sources.

- It provides a consistent architecture for extending AI models beyond their training data, enabling smarter, more scalable, and more responsive AI systems.

Why Standardization in AI Matters

- As generative AI applications become more complex, it’s essential to adopt standards that ensure scalability, extensibility, and maintainability, and that help avoid vendor lock-in. MCP addresses these needs by:

- Unifying model-tool integrations

- Reducing brittle, one-off custom solutions

- Allowing multiple models from different vendors to coexist within one ecosystem

- Note: While MCP bills itself as an open standard, there are no plans to standardize it through an existing standards body such as IEEE, IETF, W3C, or ISO.

Learning Objectives

- Define Model Context Protocol (MCP) and its use cases

- Understand how MCP standardizes model-to-tool communication

- Identify the core components of MCP architecture

- Explore real-world applications of MCP in enterprise and development contexts

Why the Model Context Protocol (MCP) Is a Game-Changer

MCP Solves Fragmentation in AI Interactions

Before MCP, integrating models with tools typically meant:

- Custom code per tool-model pair

- Non-standard APIs for each vendor

- Frequent breaks due to updates

- Poor scalability with more tools

Benefits of MCP Standardization

| Benefit | Description |
|---|---|
| Interoperability | LLMs work seamlessly with tools across different vendors |
| Consistency | Uniform behavior across platforms and tools |
| Reusability | Tools built once can be used across projects and systems |
| Accelerated Development | Reduce dev time by using standardized, plug-and-play interfaces |

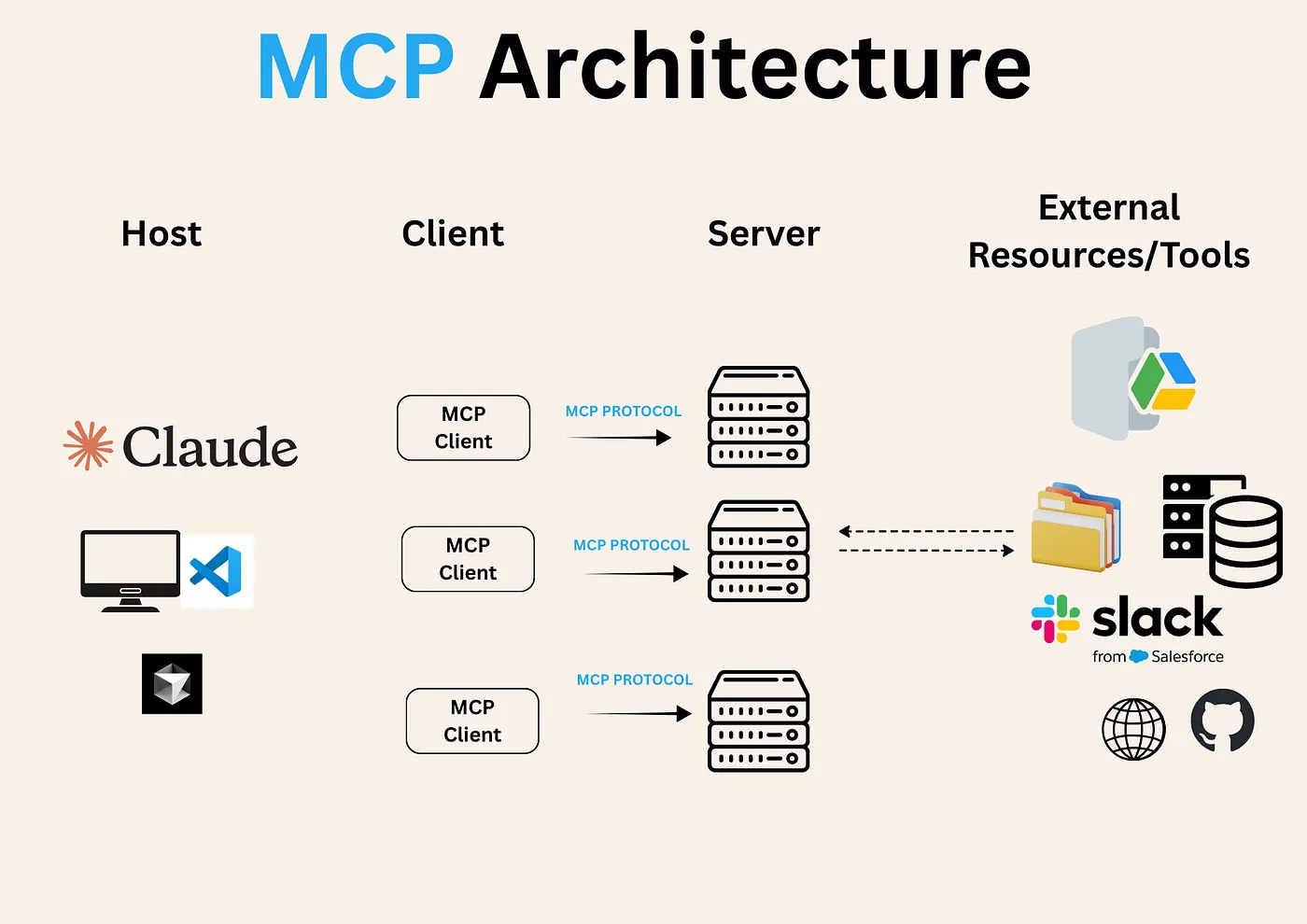

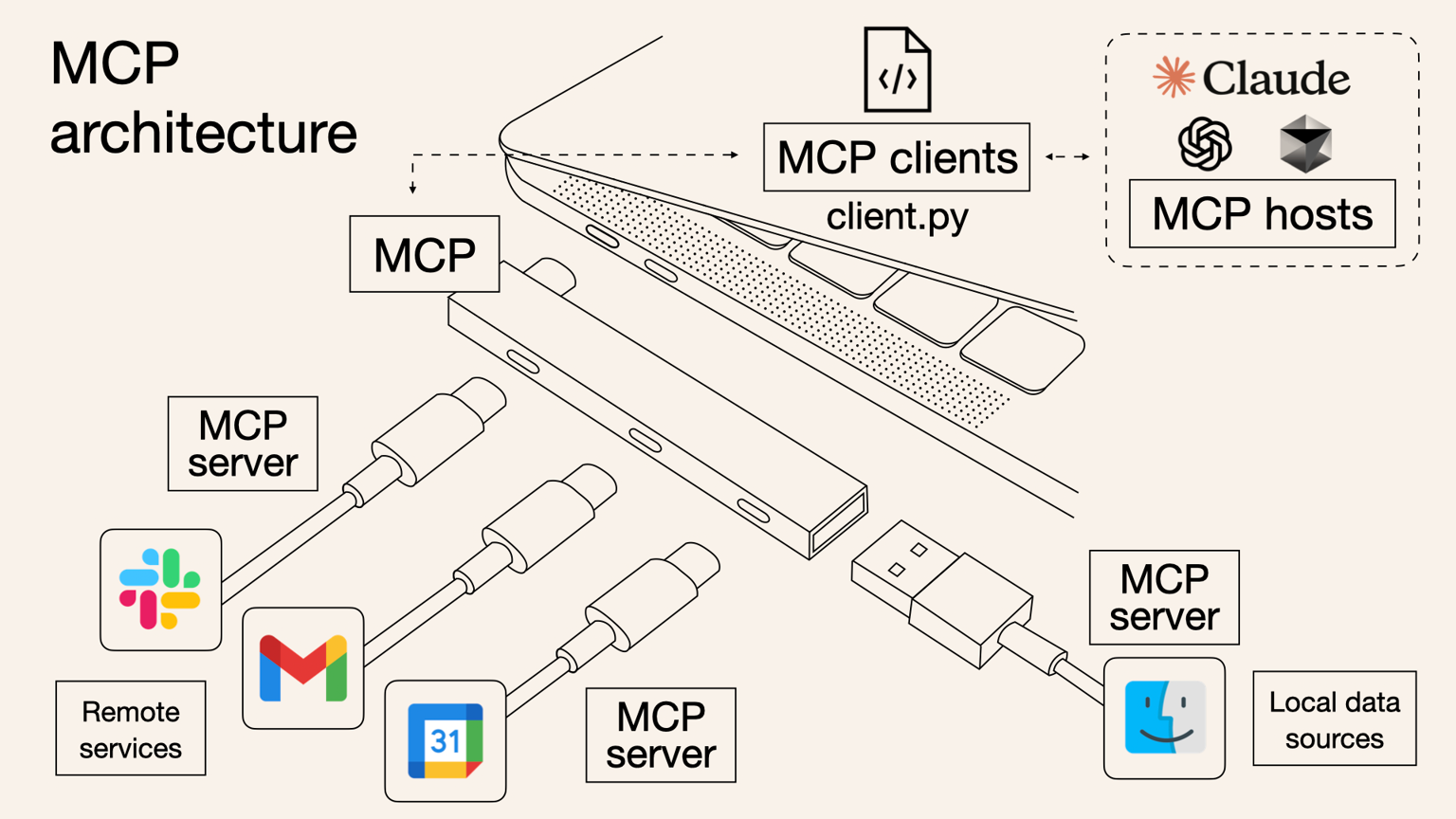

High-Level MCP Architecture Overview

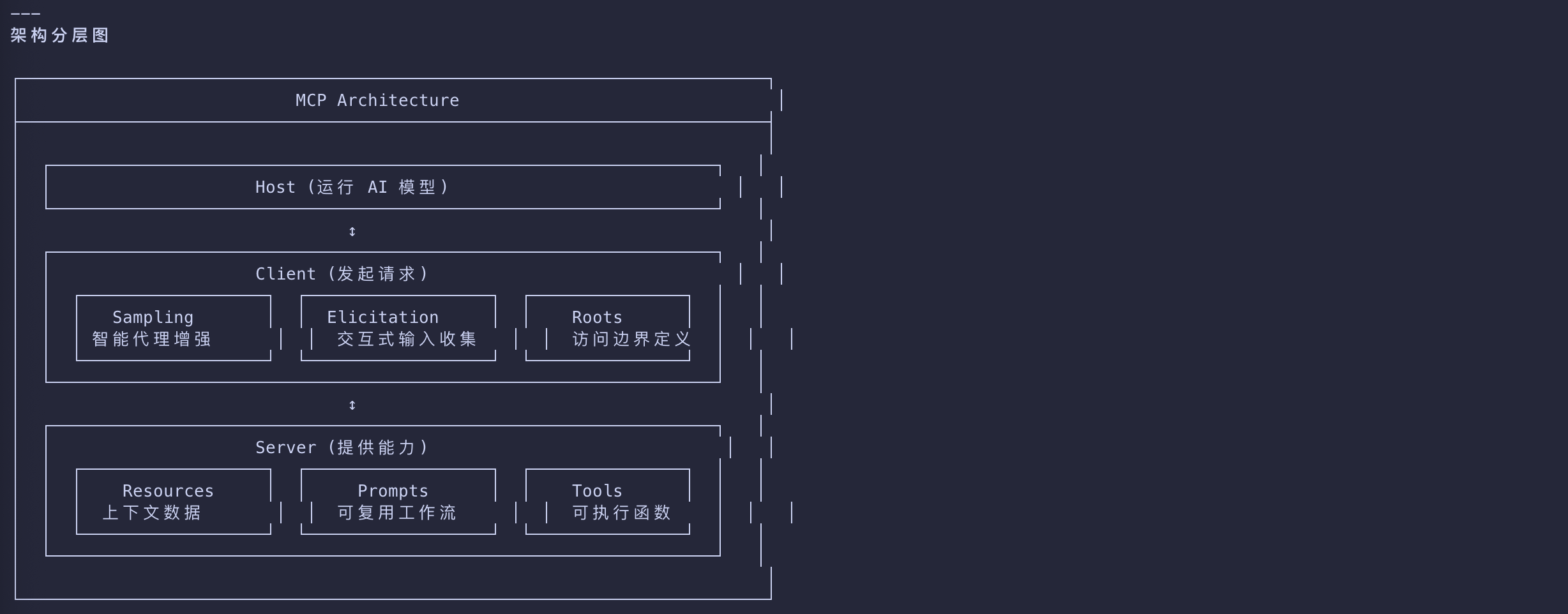

MCP follows a client-server model, where:

| Component | Role |
|---|---|
| MCP Hosts | Run the AI models |
| MCP Clients | Initiate requests |
| MCP Servers | Serve context, tools, and capabilities |

Host

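- Responsibility: hosts and runs the LLM inference engine; manages model access and credentials

- Control domain: model selection, permission policies, user authorization flows, session coordination

- Typical examples: Claude Desktop, Cursor IDE, custom AI applications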

Client

- 职责:代表 Host 发起与 Server 的连接,执行协议握手与能力协商

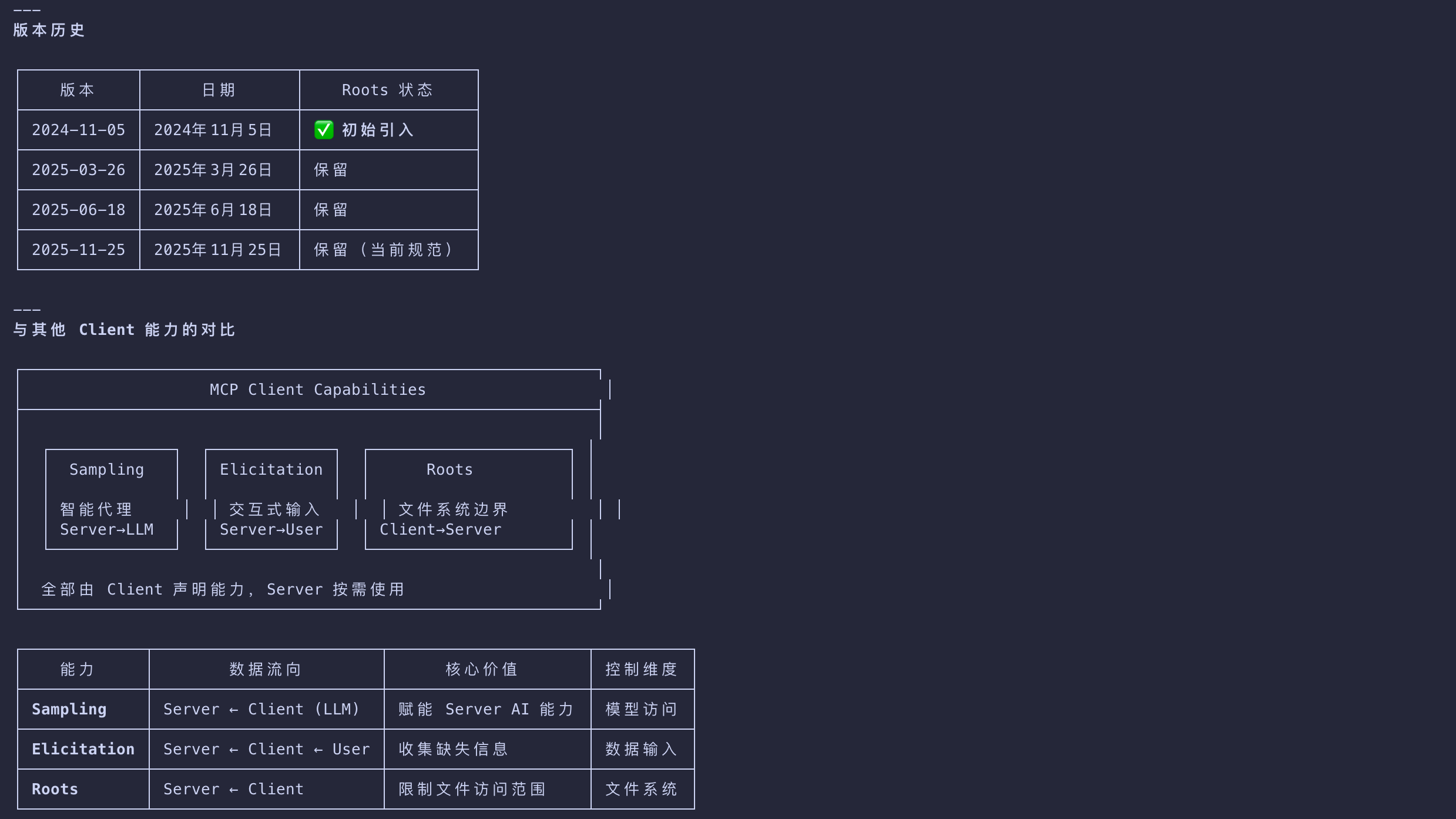

- 暴露能力:Sampling(LLM 生成)、Roots(文件系统边界)

- 通信模式:1:1 映射到单个 Server,通过 stdio 或 SSE 传输
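The handshake and capability negotiation can be sketched as plain JSON-RPC 2.0 messages. This is a minimal sketch, not an official SDK: the method names (`initialize`, `notifications/initialized`), the capability keys, and the protocol revision string `2025-06-18` are taken from the MCP specification, while `demo-client`, `demo-server`, and the helper functions are hypothetical.

```python
import json

# One published MCP protocol revision; client and server must agree on it.
PROTOCOL_VERSION = "2025-06-18"


def make_initialize_request(request_id: int) -> dict:
    """Build the client's first message: the `initialize` request."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            # Features this client offers to the server.
            "capabilities": {"roots": {"listChanged": True}, "sampling": {}},
            "clientInfo": {"name": "demo-client", "version": "0.1.0"},  # hypothetical
        },
    }


def negotiated_server_features(initialize_result: dict) -> set:
    """Return which server primitives the server declared in its reply."""
    caps = initialize_result.get("capabilities", {})
    return {name for name in ("tools", "resources", "prompts") if name in caps}


# Example reply from a hypothetical server that offers tools and resources only.
server_reply = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "protocolVersion": PROTOCOL_VERSION,
        "capabilities": {"tools": {"listChanged": True}, "resources": {}},
        "serverInfo": {"name": "demo-server", "version": "1.0.0"},
    },
}

request = make_initialize_request(1)
print(json.dumps(request))
features = negotiated_server_features(server_reply["result"])
print(sorted(features))  # ['resources', 'tools']

# After a successful reply the client confirms with a notification (no "id").
initialized = {"jsonrpc": "2.0", "method": "notifications/initialized"}
```

Because the server did not declare `prompts`, a client built this way would simply not offer prompt-related features for that connection.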

Server

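- Responsibility: exposes a structured interface to an external system or data source

- Core primitives: Resources (read-only context), Tools (executable functions), Prompts (reusable instruction templates)

- Design pattern: single-responsibility principle; each Server wraps one external system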
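A server wrapping one external system can be sketched as a dispatcher over the three core primitives. The method names (`tools/list`, `tools/call`, `resources/list`, `resources/read`, `prompts/list`) and result shapes follow the MCP specification; the in-memory note store, the `notes://` URI scheme, and the `add_note` / `summarize_notes` names are hypothetical, and transport handling is left out (requires Python 3.9+ for `str.removeprefix`).

```python
# Hypothetical external system wrapped by this server: an in-memory note store.
NOTES = {"welcome": "Hello from the note store."}

# Tools: executable functions, with a JSON Schema describing their arguments.
TOOLS = [{
    "name": "add_note",
    "description": "Store a note under a key.",
    "inputSchema": {
        "type": "object",
        "properties": {"key": {"type": "string"}, "text": {"type": "string"}},
        "required": ["key", "text"],
    },
}]

# Prompts: reusable instruction templates.
PROMPTS = [{"name": "summarize_notes", "description": "Summarize all stored notes."}]


def _error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Dispatch one JSON-RPC request to the matching MCP primitive."""
    rid, method, params = request["id"], request["method"], request.get("params", {})
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        if params.get("name") != "add_note":
            return _error(rid, -32602, f"Unknown tool: {params.get('name')}")
        args = params["arguments"]
        NOTES[args["key"]] = args["text"]
        result = {"content": [{"type": "text", "text": f"Saved {args['key']}"}], "isError": False}
    elif method == "resources/list":
        # Resources: read-only context, one per stored note.
        result = {"resources": [{"uri": f"notes://{k}", "name": k} for k in NOTES]}
    elif method == "resources/read":
        key = params["uri"].removeprefix("notes://")
        if key not in NOTES:
            return _error(rid, -32602, f"Unknown resource: {params['uri']}")
        result = {"contents": [{"uri": params["uri"], "mimeType": "text/plain", "text": NOTES[key]}]}
    elif method == "prompts/list":
        result = {"prompts": PROMPTS}
    else:
        # -32601 is the JSON-RPC 2.0 "Method not found" error code.
        return _error(rid, -32601, f"Method not found: {method}")
    return {"jsonrpc": "2.0", "id": rid, "result": result}


reply = handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                "params": {"name": "add_note", "arguments": {"key": "todo", "text": "Buy milk"}}})
print(reply["result"]["content"][0]["text"])  # Saved todo
```

Keeping one external system per server, as the design pattern above suggests, keeps each dispatcher this small.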

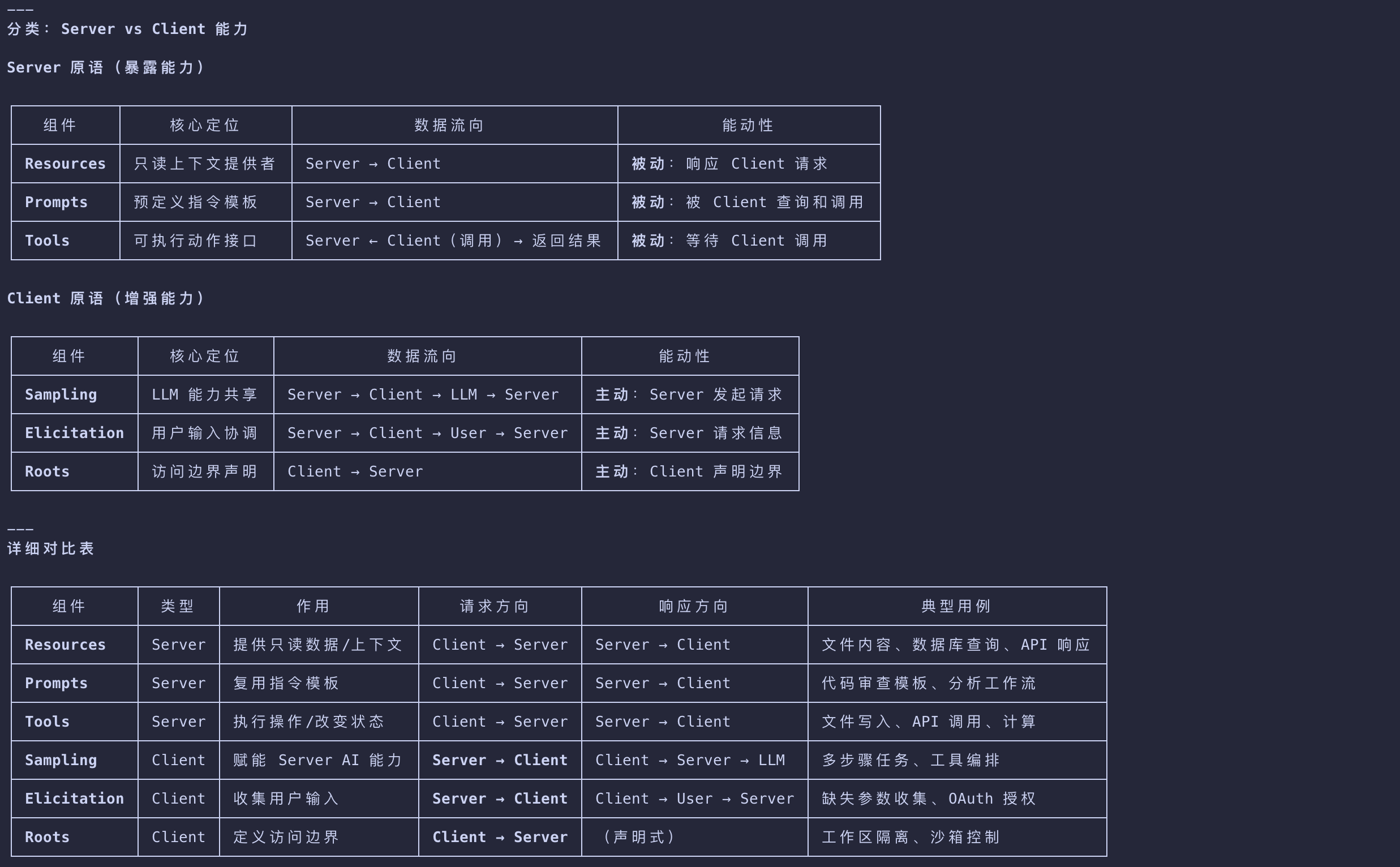

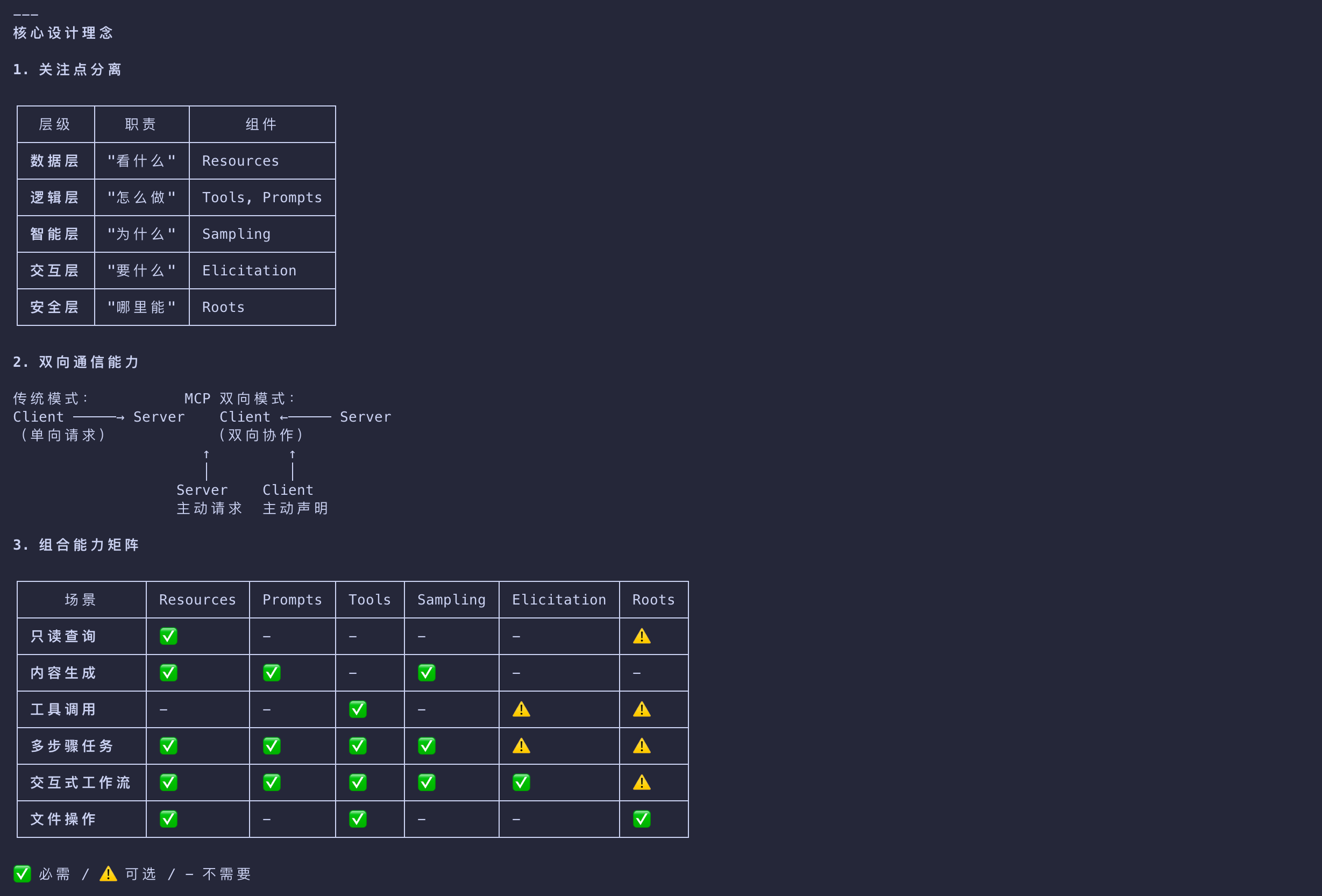

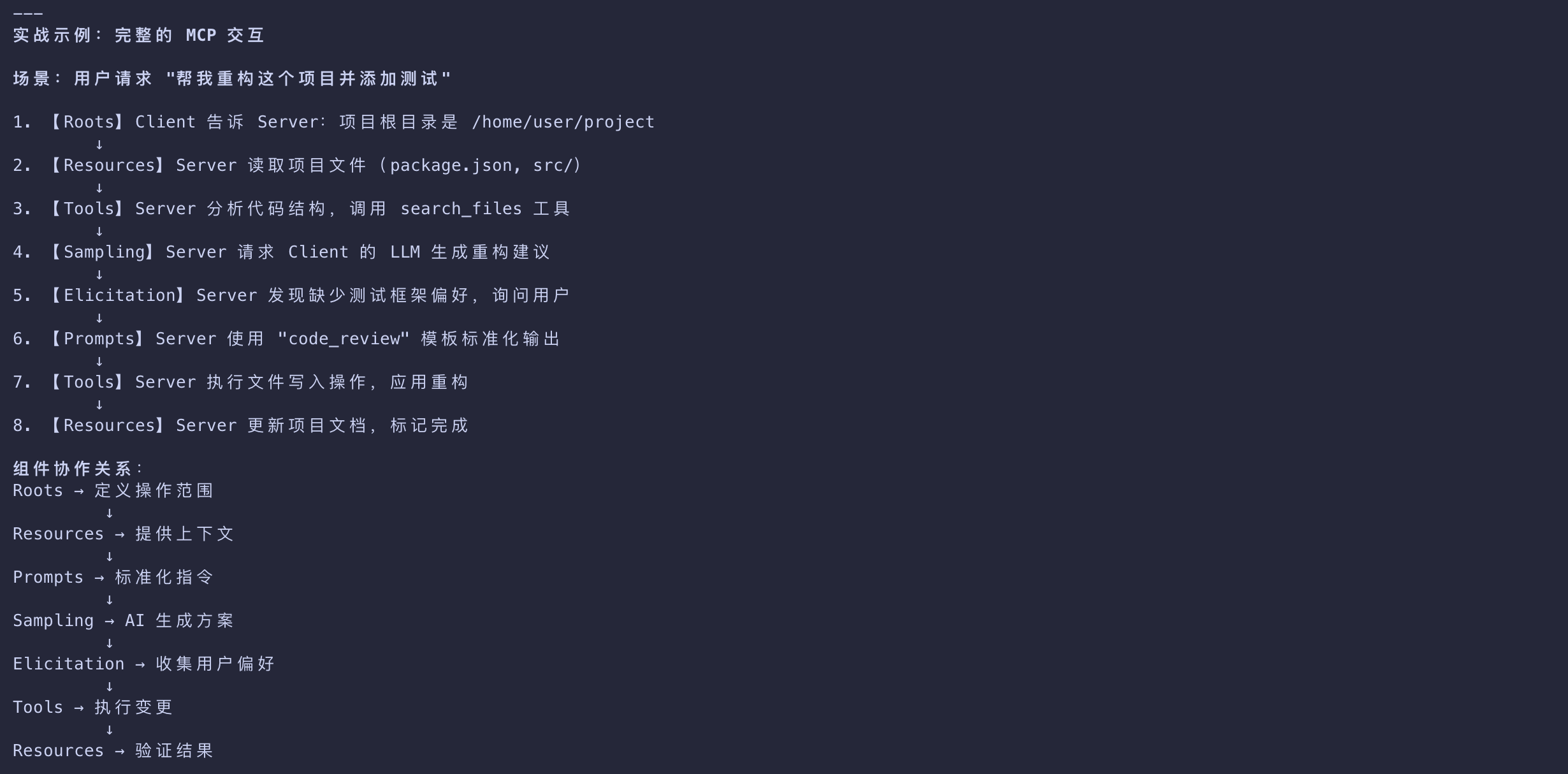

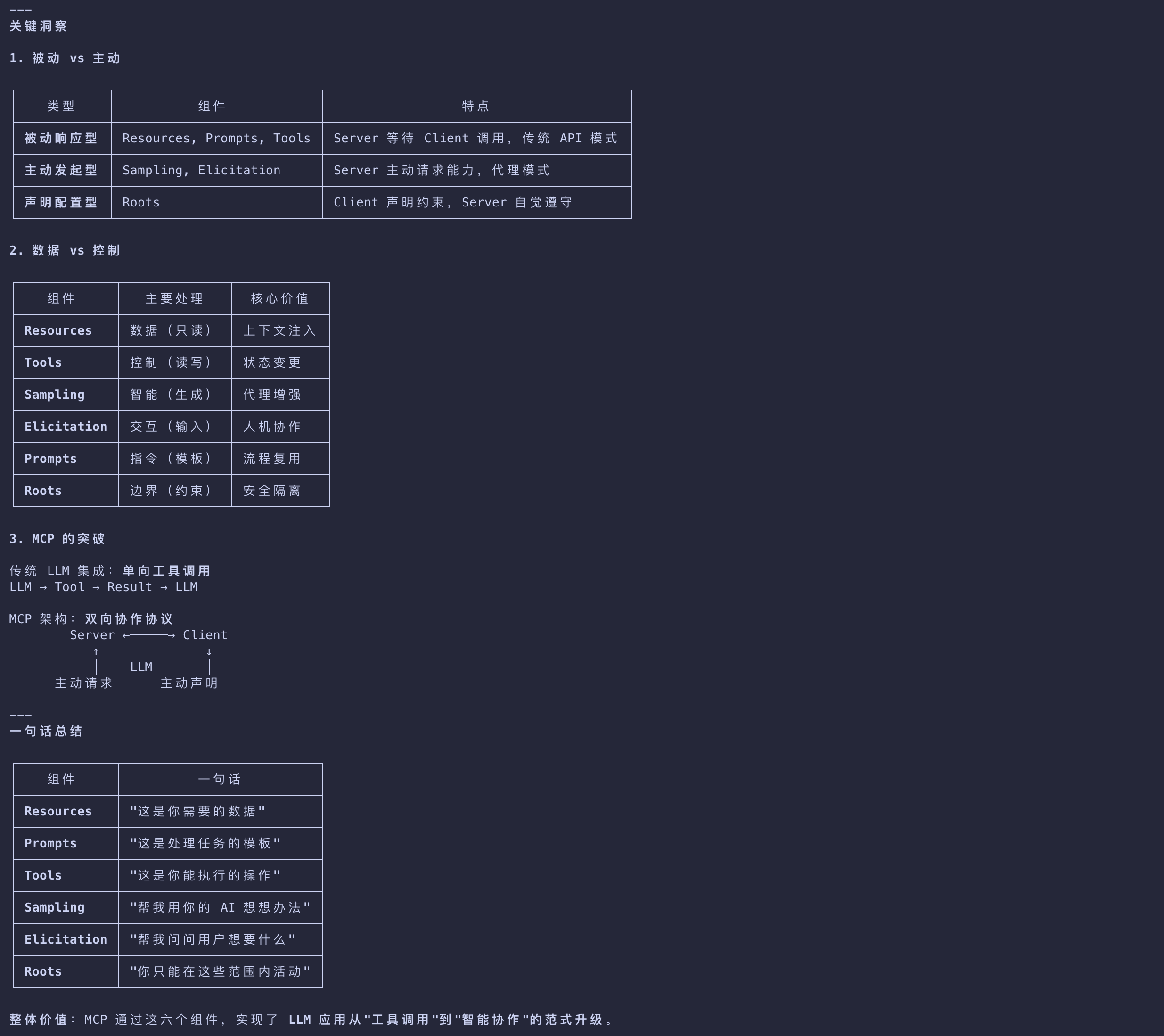

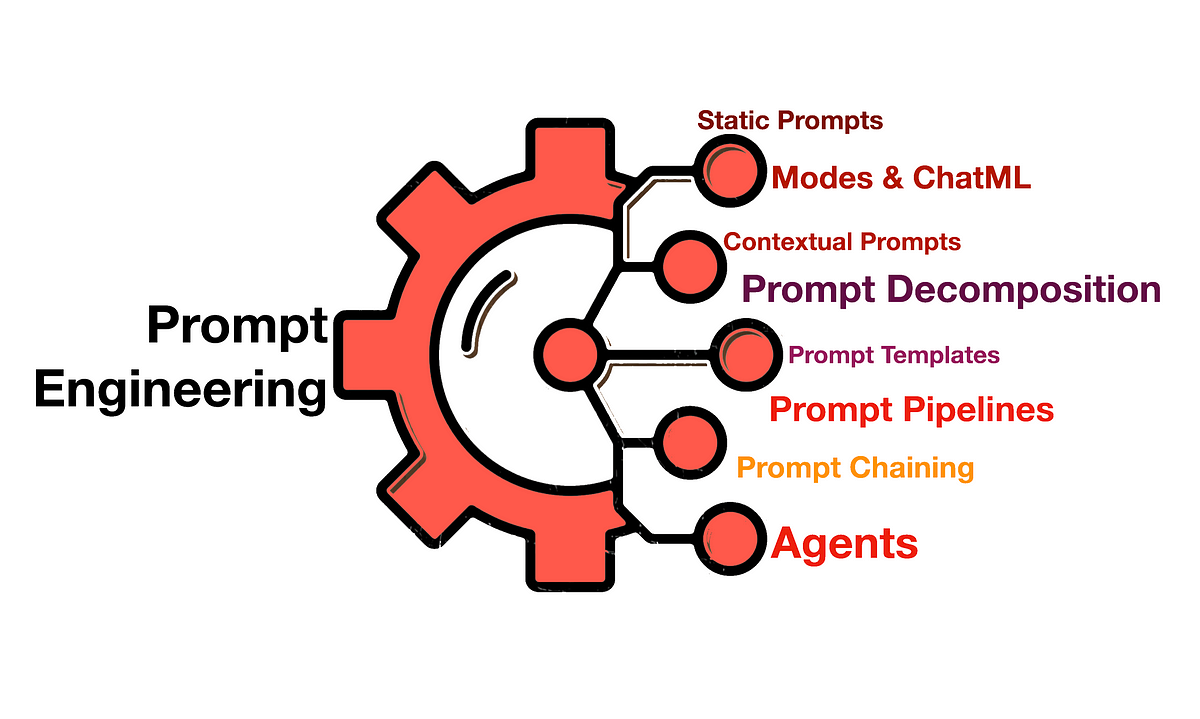

Key Components

| Components | Desc |

|---|---|

| Resources | Static or dynamic data for models |

| Prompts | Predefined workflows for guided generation |

| Tools | Executable functions like search, calculations |

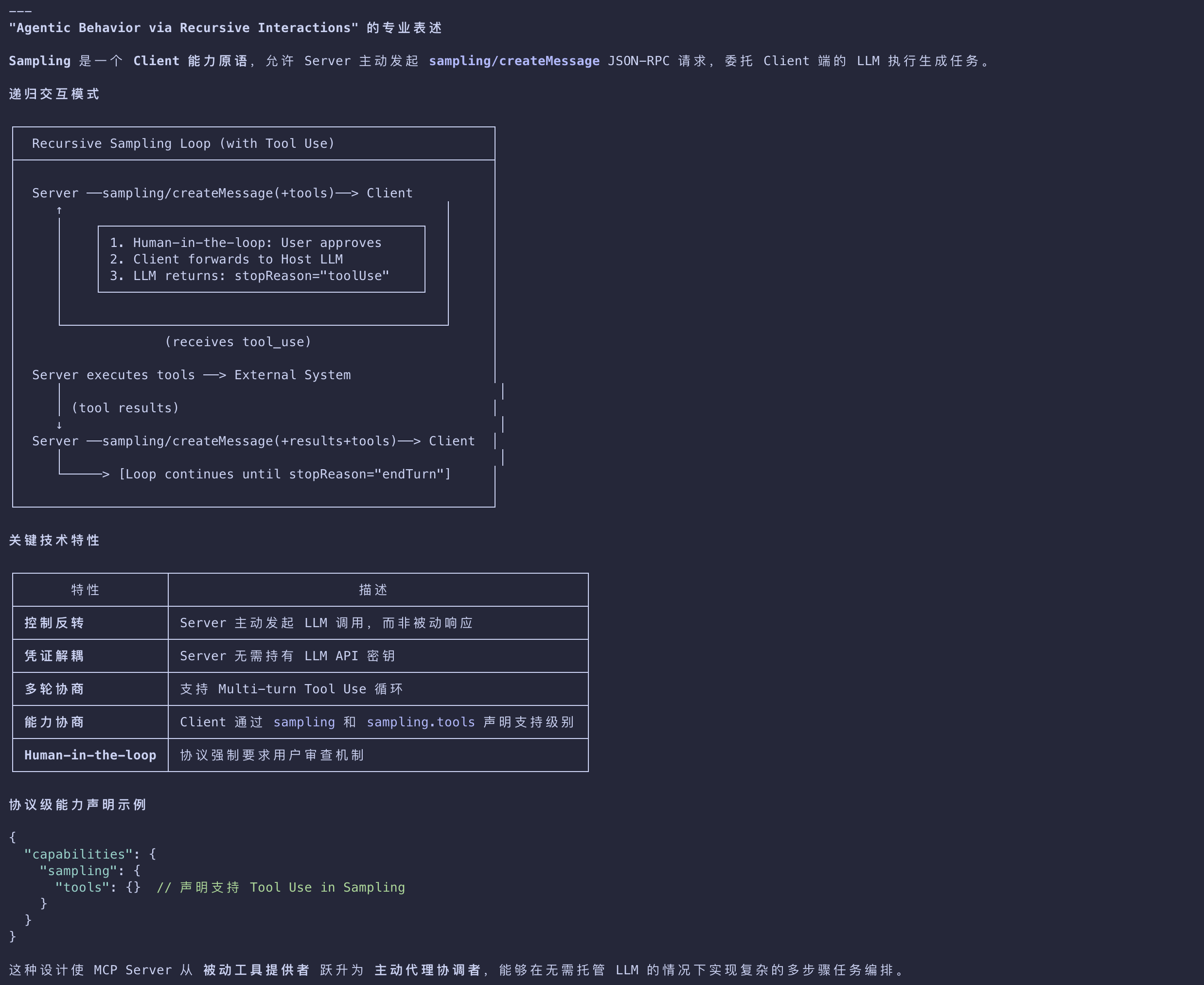

| Sampling | Agentic behavior via recursive interactions |

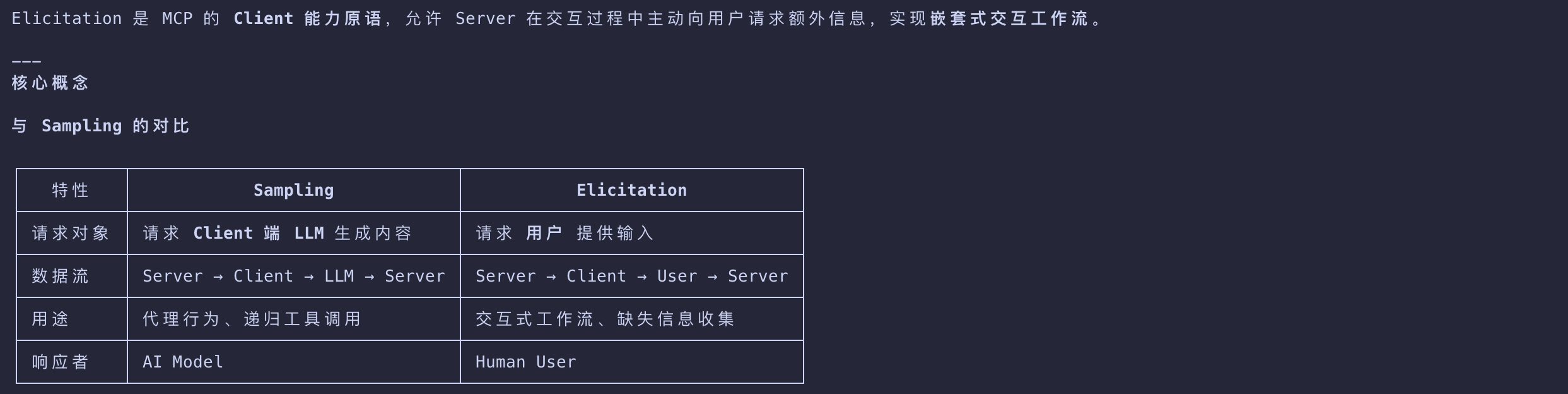

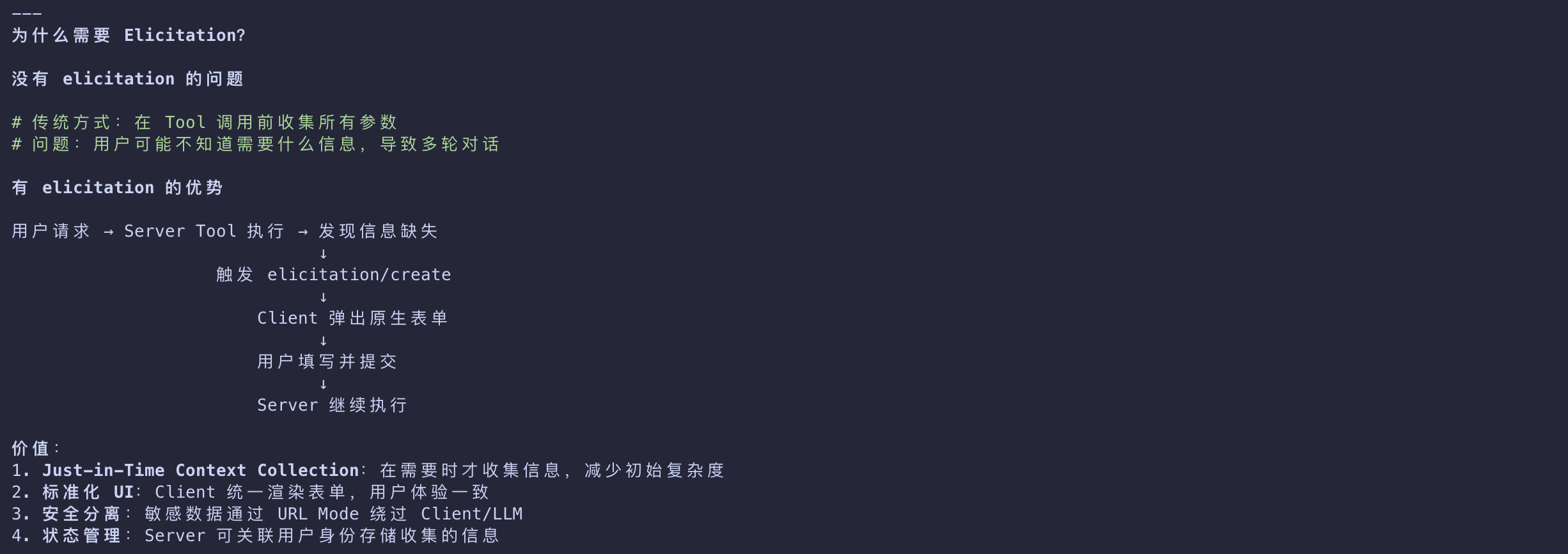

| Elicitation | Server-initiated requests for user input |

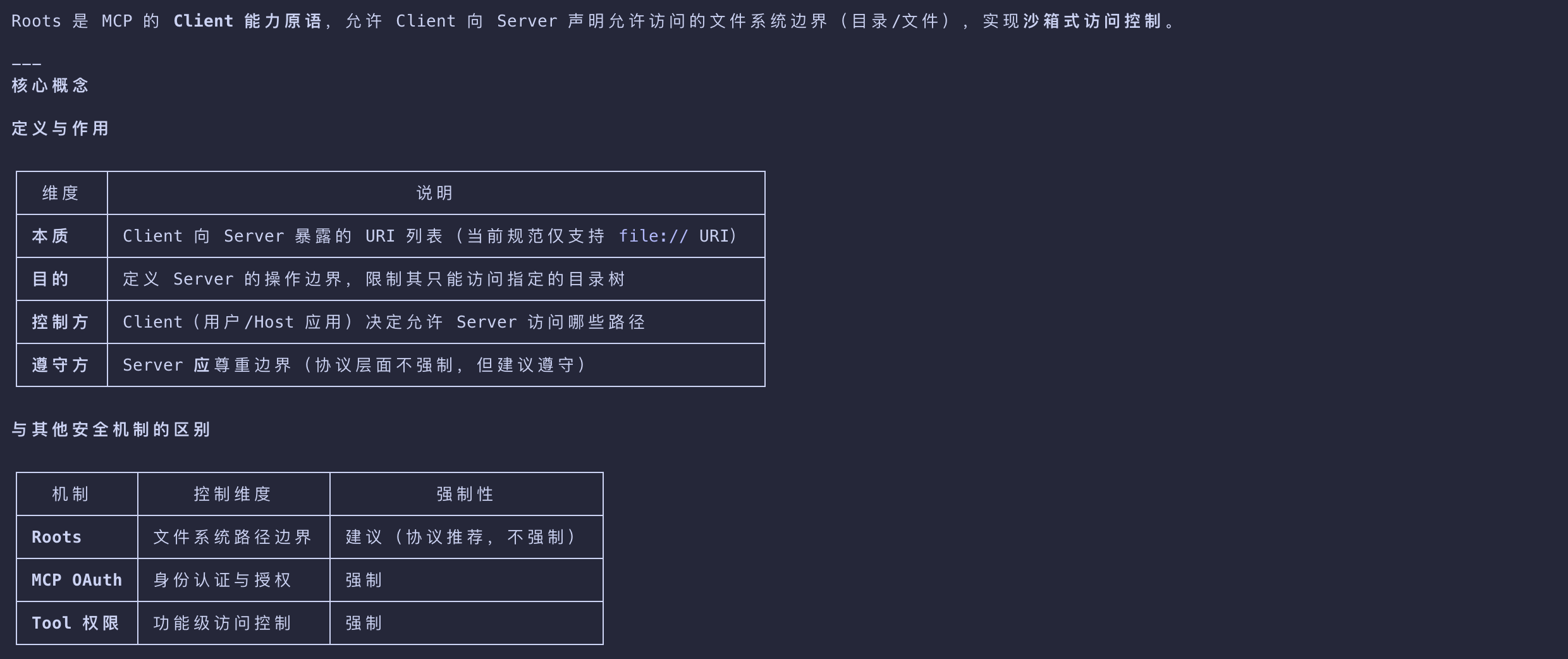

| Roots | Filesystem boundaries for server access control |

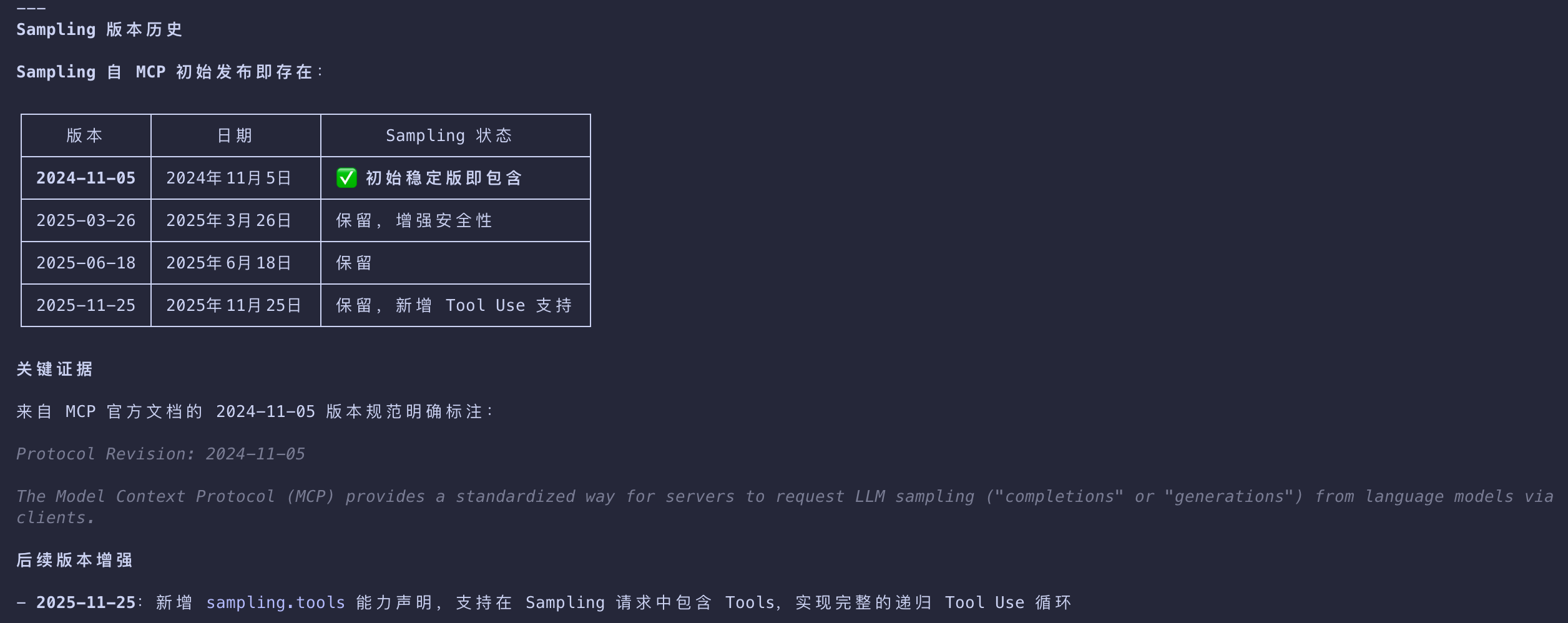

Sampling

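Sampling lets a server ask the client to generate an LLM completion on its behalf. This enables agentic behavior via recursive interactions: while handling a request, a server can call back into the host's model without needing its own model API keys, and the client can keep a human in the loop to review each request.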
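The round trip can be sketched as a pair of JSON-RPC messages. The method name `sampling/createMessage` and the fields `messages`, `systemPrompt`, `maxTokens`, `role`, `content`, `model`, and `stopReason` follow the MCP specification; `fake_llm`, the `user_approves` callback, and the model name are hypothetical stand-ins for the host's real model and approval UI.

```python
import itertools

_request_ids = itertools.count(1)  # server-side counter for outgoing request ids


def make_sampling_request(question: str) -> dict:
    """Server -> client: ask the host's LLM for a completion."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "sampling/createMessage",
        "params": {
            "messages": [{"role": "user", "content": {"type": "text", "text": question}}],
            "systemPrompt": "Answer in one sentence.",
            "maxTokens": 100,
        },
    }


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for the model the host actually runs."""
    return f"(model answer to: {prompt})"


def client_handle_sampling(request: dict, user_approves=lambda req: True) -> dict:
    """Client side: let the user approve the request, run the model, and reply."""
    if not user_approves(request):
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -1, "message": "User rejected sampling request"}}
    prompt = request["params"]["messages"][-1]["content"]["text"]
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {
            "role": "assistant",
            "content": {"type": "text", "text": fake_llm(prompt)},
            "model": "fake-model",  # hypothetical model identifier
            "stopReason": "endTurn",
        },
    }


req = make_sampling_request("Summarize the open tickets")
resp = client_handle_sampling(req)
print(resp["result"]["content"]["text"])  # (model answer to: Summarize the open tickets)
```

The direction is the interesting part: here the server sends the request and the client answers, the reverse of a normal tool call.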

Elicitation

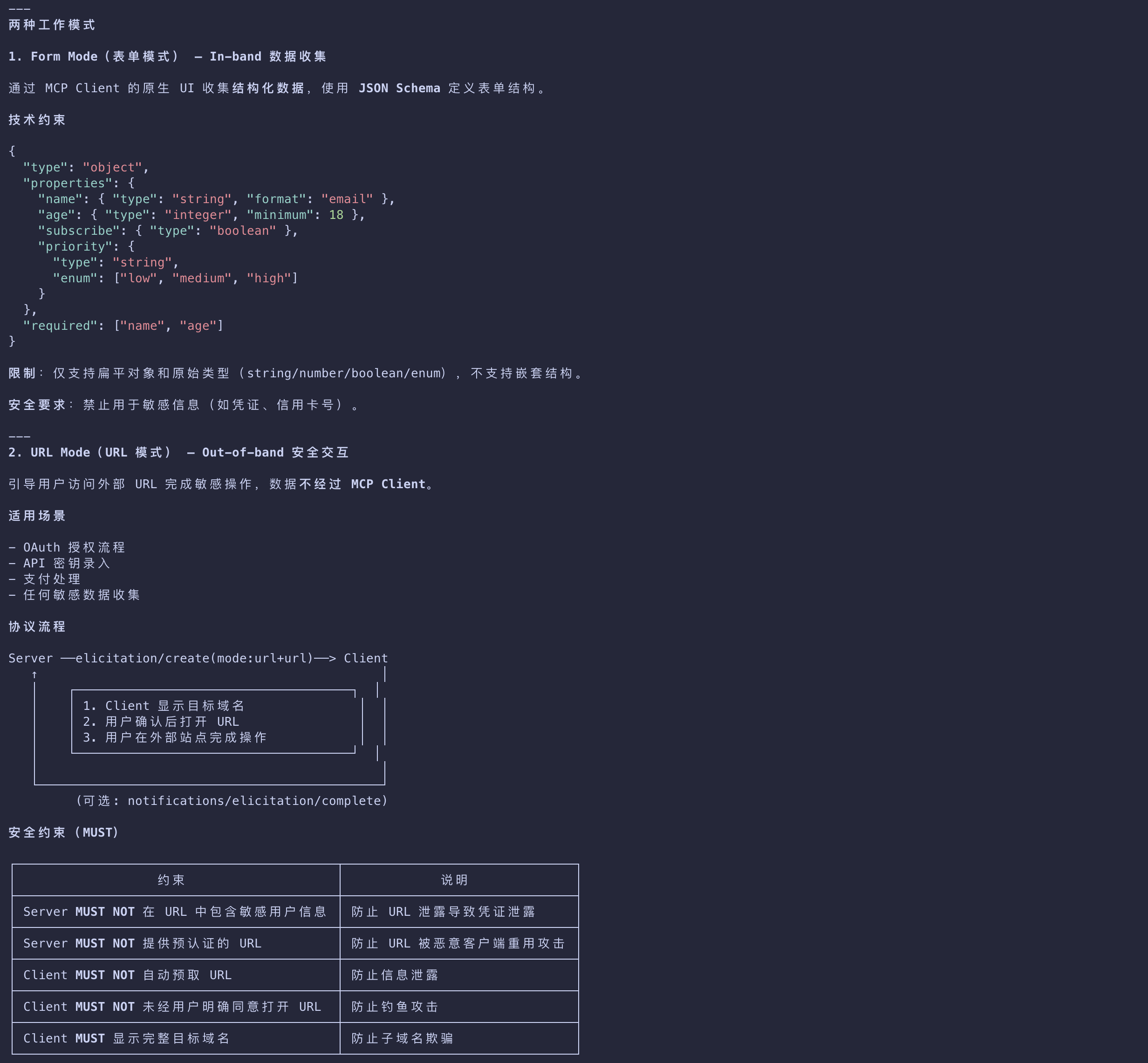

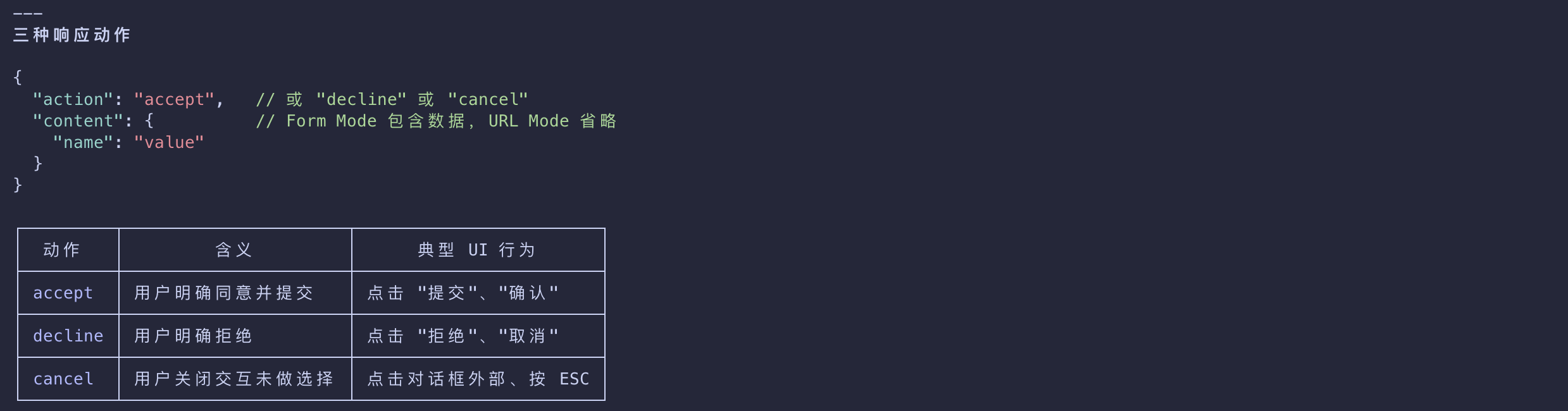

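Server-initiated requests for user input: while handling a request, the server asks the client to collect specific information from the user, who can accept, decline, or cancel.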

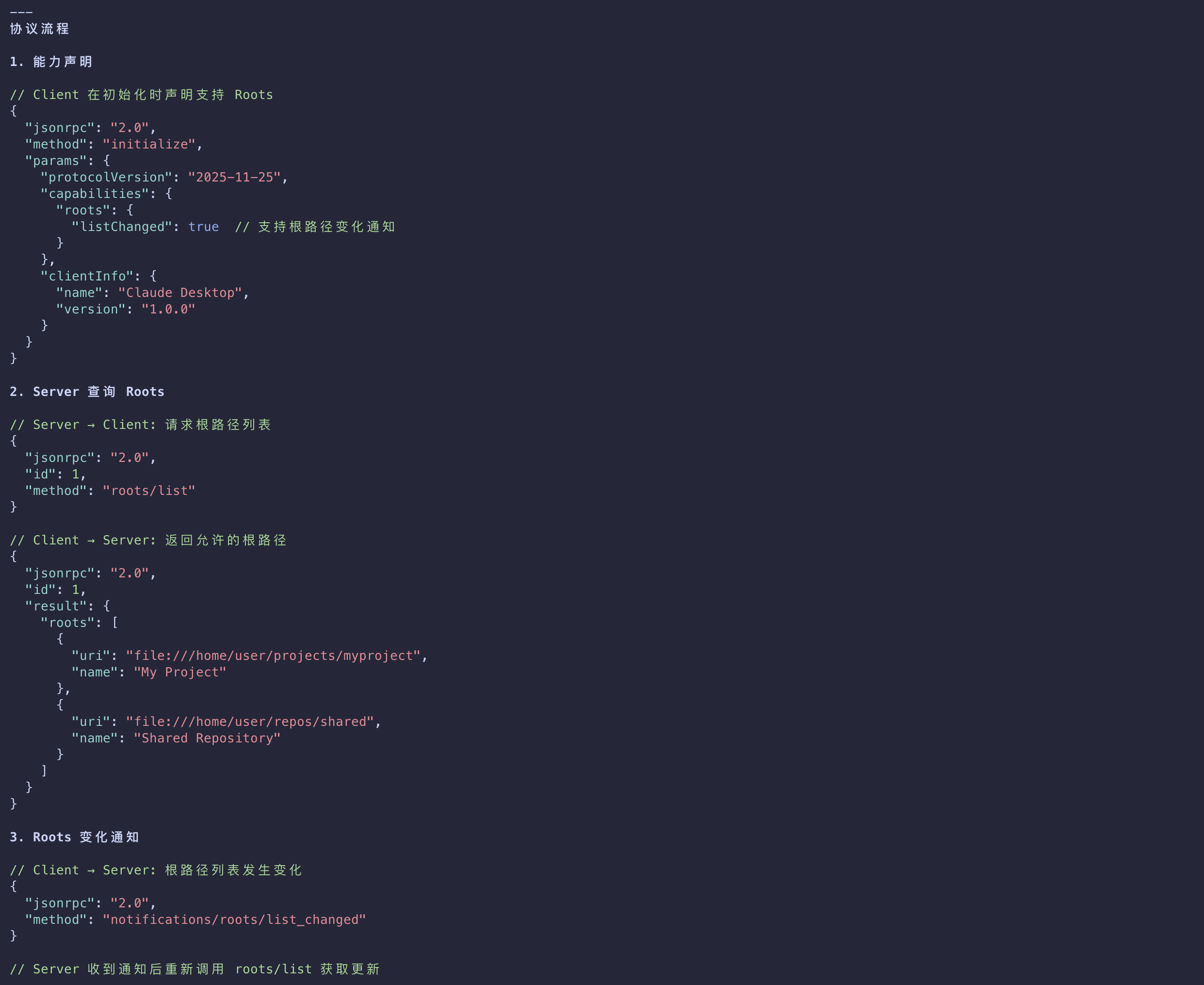

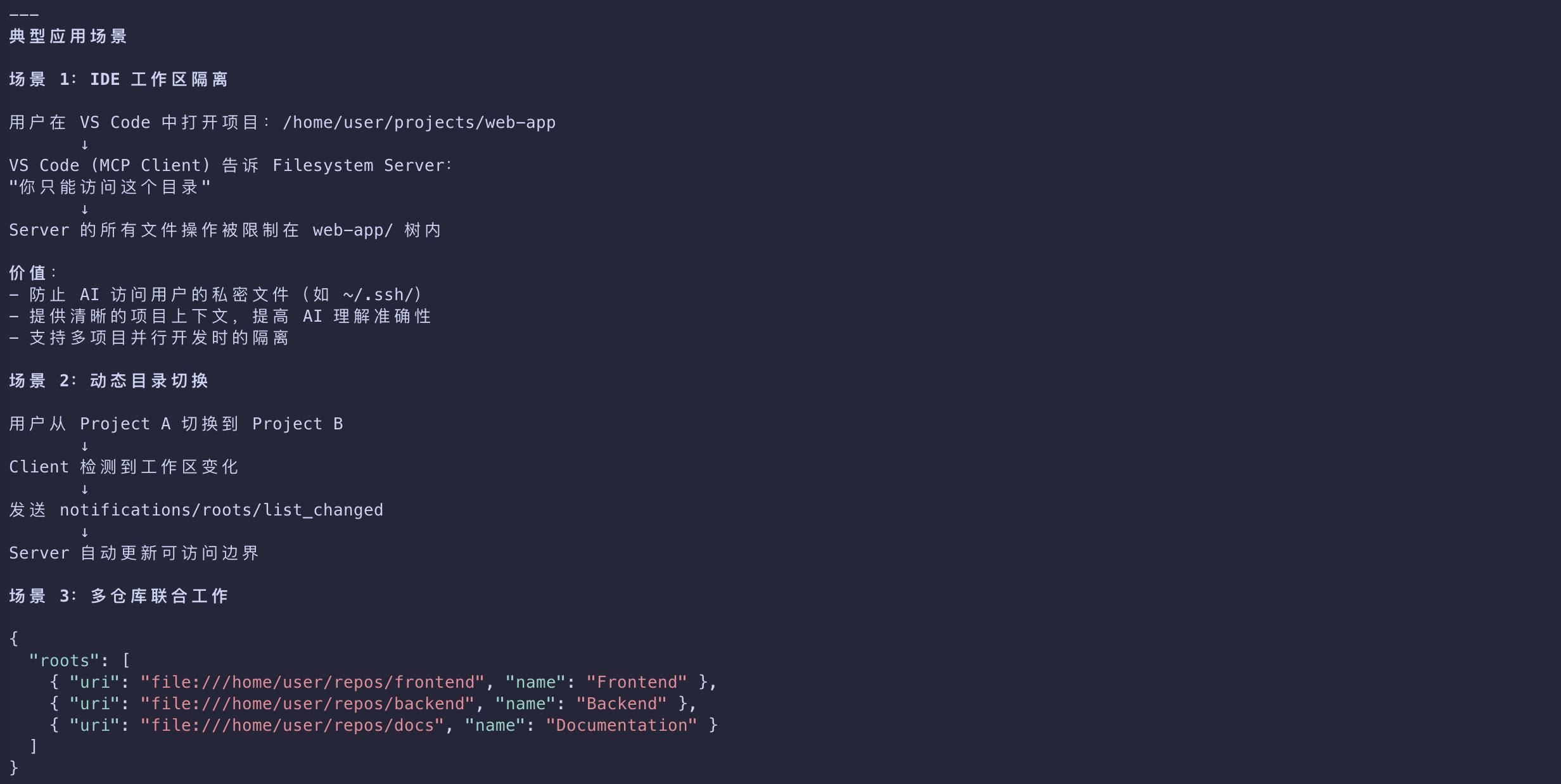

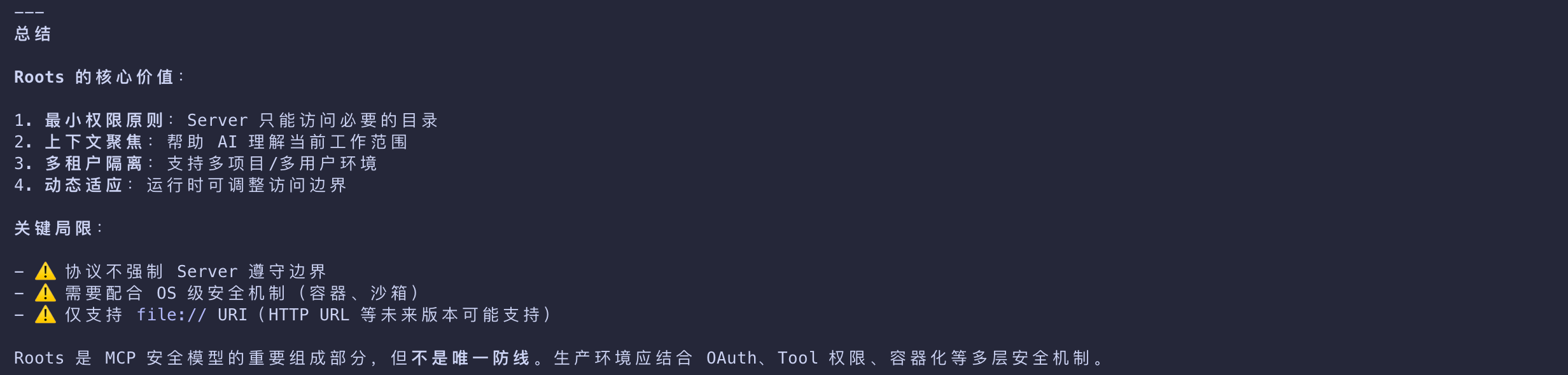
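An elicitation request and a server-side check of the reply might look like the sketch below. The method `elicitation/create`, the `message` / `requestedSchema` params, and the `accept` / `decline` / `cancel` actions follow the MCP specification (which restricts the schema to a flat object of primitive properties); the `project` field and the simplified validator are hypothetical and do not implement full JSON Schema.

```python
def make_elicitation_request(request_id: int) -> dict:
    """Server -> client: ask the user for structured input."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "elicitation/create",
        "params": {
            "message": "Which project should I deploy?",
            "requestedSchema": {
                "type": "object",
                "properties": {"project": {"type": "string"}},  # hypothetical field
                "required": ["project"],
            },
        },
    }


def _matches(value, json_type: str) -> bool:
    """Check a value against a primitive JSON Schema type."""
    if json_type == "boolean":
        return isinstance(value, bool)
    if isinstance(value, bool):
        return False  # bool is a subclass of int in Python; don't accept it as a number
    if json_type == "integer":
        return isinstance(value, int)
    if json_type == "number":
        return isinstance(value, (int, float))
    return json_type == "string" and isinstance(value, str)


def validate_elicitation_result(result: dict, schema: dict) -> bool:
    """True only if the user accepted and supplied data matching the schema."""
    if result.get("action") != "accept":
        return False  # "decline" or "cancel": the server must continue without the data
    content = result.get("content", {})
    if any(name not in content for name in schema.get("required", [])):
        return False
    props = schema["properties"]
    return all(name in props and _matches(value, props[name]["type"])
               for name, value in content.items())


req = make_elicitation_request(7)
schema = req["params"]["requestedSchema"]
ok = validate_elicitation_result({"action": "accept", "content": {"project": "web-app"}}, schema)
declined = validate_elicitation_result({"action": "decline"}, schema)
print(ok, declined)  # True False
```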

Roots

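Filesystem boundaries for server access control: the client tells servers, as a list of file:// URIs, which directories they are expected to operate in.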
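A server that honors roots could check paths against them as sketched here. The `roots/list` result shape (`roots` entries with `uri` and `name`) follows the MCP specification; the paths and the `is_within_roots` helper are hypothetical. Note that roots are advisory: the protocol informs the server, and it is up to the server to enforce the check.

```python
import posixpath
from pathlib import PurePosixPath
from urllib.parse import unquote, urlparse


def roots_list_result() -> dict:
    """Client's reply payload for a server's roots/list request (hypothetical paths)."""
    return {"roots": [
        {"uri": "file:///home/user/projects/frontend", "name": "Frontend Repository"},
        {"uri": "file:///home/user/projects/backend", "name": "Backend Repository"},
    ]}


def root_paths(result: dict) -> list:
    """Turn file:// root URIs into POSIX paths."""
    return [PurePosixPath(unquote(urlparse(r["uri"]).path)) for r in result["roots"]]


def is_within_roots(path: str, roots: list) -> bool:
    """True if the absolute `path` lies inside one of the roots.

    normpath collapses '..' segments lexically; it does not resolve symlinks,
    so a real server would also want to check the resolved path.
    """
    target = PurePosixPath(posixpath.normpath(path))
    return any(target == root or root in target.parents for root in roots)


roots = root_paths(roots_list_result())
print(is_within_roots("/home/user/projects/frontend/src/app.ts", roots))  # True
print(is_within_roots("/home/user/projects/frontend/../secrets.txt", roots))  # False
```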

Summary

Protocol Architecture

MCP uses a two-layer architecture:

| Layer | Description |
|---|---|
| Data Layer | JSON-RPC 2.0 based communication with lifecycle management and primitives |
| Transport Layer | STDIO (local) and Streamable HTTP with SSE (remote) communication channels |
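The split between the two layers shows up clearly with STDIO: the data layer defines the JSON-RPC messages, and the transport only decides how they travel. In the STDIO transport the host launches the server as a subprocess and exchanges newline-delimited JSON-RPC messages over its stdin/stdout. The sketch below uses `io.StringIO` in place of real pipes; the helper names are hypothetical.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    """STDIO framing: exactly one JSON-RPC message per line."""
    # json.dumps escapes newlines inside strings, so each message stays on one line.
    stream.write(json.dumps(message) + "\n")


def read_messages(stream):
    """Yield each JSON-RPC message from a newline-delimited stream."""
    for line in stream:
        if line.strip():
            yield json.loads(line)


def kind(message: dict) -> str:
    """Classify a JSON-RPC 2.0 message: request, notification, or response."""
    if "method" in message:
        return "request" if "id" in message else "notification"
    return "response"


# io.StringIO stands in for the server process's stdin/stdout pipes.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(pipe, {"jsonrpc": "2.0", "method": "notifications/initialized"})
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "result": {"tools": []}})
pipe.seek(0)
kinds = [kind(m) for m in read_messages(pipe)]
print(kinds)  # ['request', 'notification', 'response']
```

Swapping STDIO for Streamable HTTP would change only `write_message` and `read_messages`; the message dictionaries stay the same.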

How MCP Servers Work

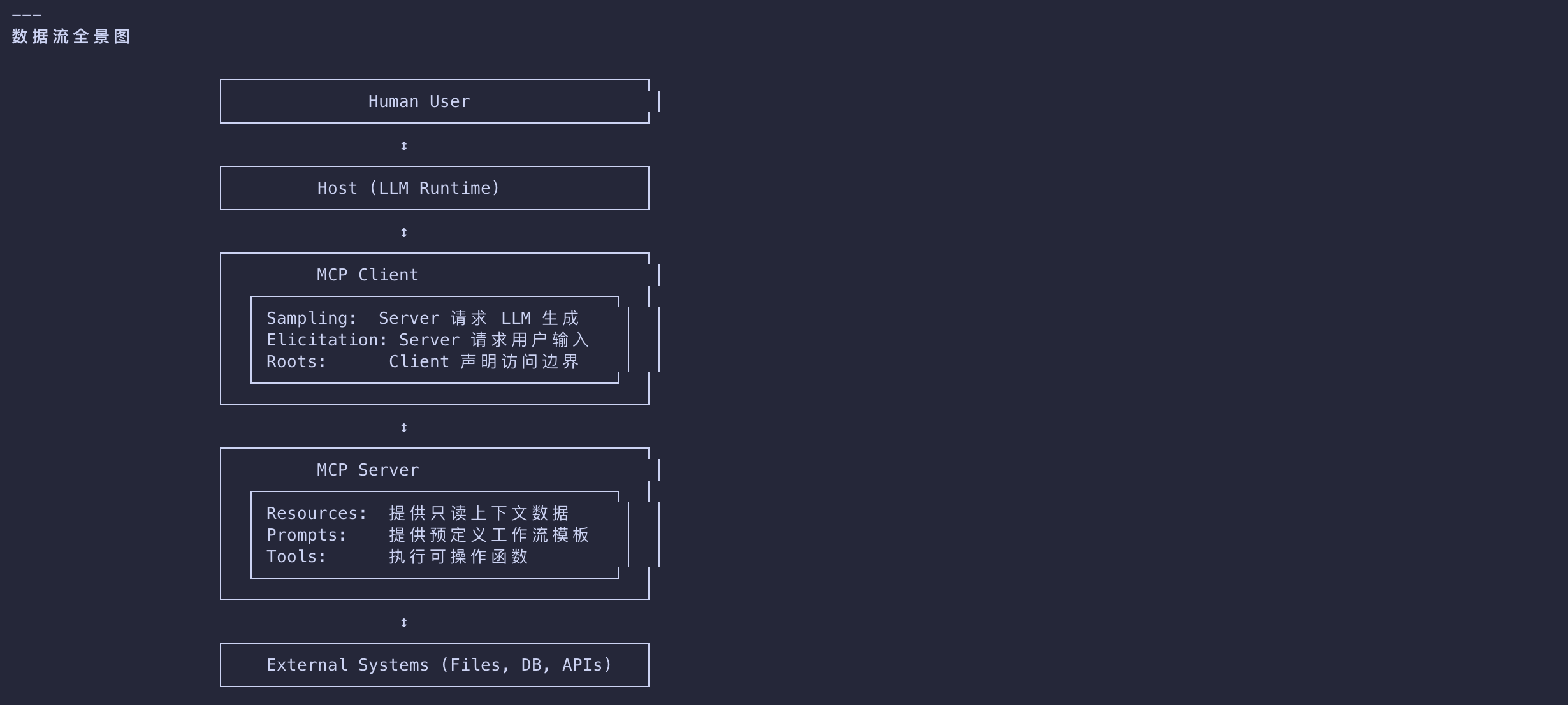

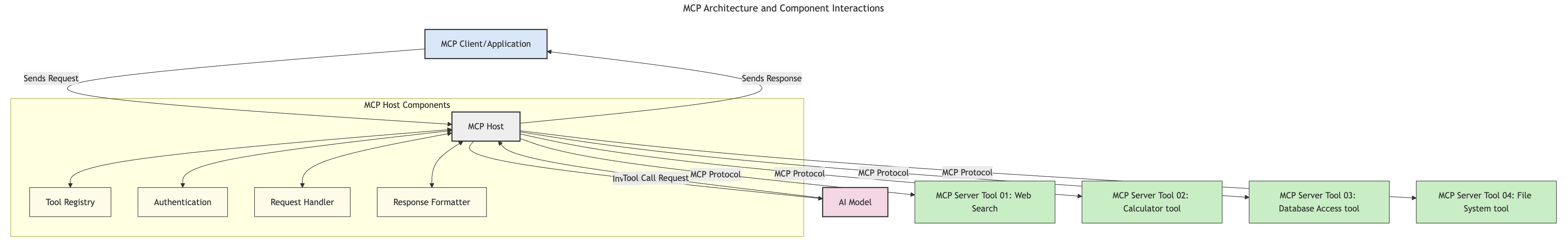

Request Flow

- A request is initiated by an end user or software acting on their behalf.

- The MCP Client sends the request to an MCP Host, which manages the AI Model runtime.

- The AI Model receives the user prompt and may request access to external tools or data via one or more tool calls.

- The MCP Host, not the model directly, communicates with the appropriate MCP Server(s) using the standardized protocol.

MCP Host Functionality

| Functionality | Description |
|---|---|
| Tool Registry | Maintains a catalog of available tools and their capabilities. |
| Authentication | Verifies permissions for tool access. |
| Request Handler | Processes incoming tool requests from the model. |
| Response Formatter | Structures tool outputs in a format the model can understand. |
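The four host functions in the table, together with the routing of tool calls to servers, can be sketched in one small class. Everything here is hypothetical: `HostToolRouter`, the permission set, and the `calculator` server are illustrations, and each "server" is a plain function returning an MCP-style `tools/call` result instead of a real connection over a transport.

```python
from dataclasses import dataclass, field
from typing import Callable

# Stand-in for a connected MCP server: takes (tool_name, arguments) and returns
# a tools/call-style result. A real host would send the request over a transport.
ServerCall = Callable[[str, dict], dict]


@dataclass
class HostToolRouter:
    registry: dict = field(default_factory=dict)  # tool name -> (server name, call fn)
    allowed: set = field(default_factory=set)     # tool names the user has authorized

    def register(self, server: str, tools: list, call: ServerCall) -> None:
        """Tool Registry: record which server provides each tool."""
        for tool in tools:
            self.registry[tool["name"]] = (server, call)

    def handle(self, tool_name: str, arguments: dict) -> str:
        """Request Handler: check permissions, route to the owning server, format."""
        if tool_name not in self.registry:
            return f"Error: unknown tool '{tool_name}'"
        if tool_name not in self.allowed:  # Authentication / permission check
            return f"Error: tool '{tool_name}' is not authorized"
        server, call = self.registry[tool_name]
        return self.format(server, call(tool_name, arguments))

    @staticmethod
    def format(server: str, result: dict) -> str:
        """Response Formatter: join text content blocks into one string for the model."""
        text = "\n".join(c["text"] for c in result.get("content", []) if c.get("type") == "text")
        prefix = "Tool error" if result.get("isError") else "Result"
        return f"{prefix} from {server}: {text}"


def calculator(tool_name: str, arguments: dict) -> dict:
    """Hypothetical MCP server exposing an `add` tool."""
    total = arguments["a"] + arguments["b"]
    return {"content": [{"type": "text", "text": str(total)}], "isError": False}


router = HostToolRouter(allowed={"add"})
router.register("calc-server", [{"name": "add"}, {"name": "multiply"}], calculator)
print(router.handle("add", {"a": 2, "b": 3}))       # Result from calc-server: 5
print(router.handle("multiply", {"a": 2, "b": 3}))  # Error: tool 'multiply' is not authorized
```

Routing by tool name is one simple design; hosts with many servers may also need to namespace tool names to avoid collisions.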

MCP Server Execution

- The MCP Host routes tool calls to one or more MCP Servers, each exposing specialized functions (e.g., search, calculations, database queries).

- The MCP Servers perform their respective operations and return results to the MCP Host in a consistent format.

- The MCP Host formats and relays these results to the AI Model.

Response Completion

- The AI Model incorporates the tool outputs into a final response.

- The MCP Host sends this response back to the MCP Client, which delivers it to the end user or calling software.

Real-World Use Cases for MCP

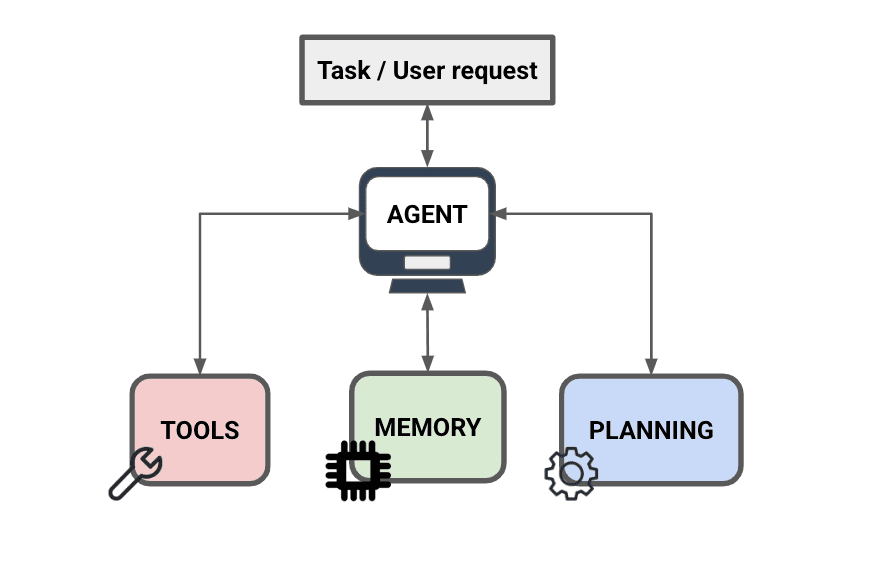

MCP enables a wide range of applications by extending AI capabilities:

| Application | Description |
|---|---|
| Enterprise Data Integration | Connect LLMs to databases, CRMs, or internal tools |
| Agentic AI Systems | Enable autonomous agents with tool access and decision-making workflows |
| Multi-modal Applications | Combine text, image, and audio tools within a single unified AI app |
| Real-time Data Integration | Bring live data into AI interactions for more accurate, current outputs |

MCP = Universal Standard for AI Interactions

- MCP acts as a universal standard for AI interactions, much like how USB-C standardized physical connections for devices.

- In the world of AI, MCP provides a consistent interface, allowing models (clients) to integrate seamlessly with external tools and data providers (servers).

- This eliminates the need for diverse, custom protocols for each API or data source.

- Under MCP, an MCP-compatible tool (referred to as an MCP server) follows a unified standard.

- These servers can list the tools or actions they offer and execute those actions when requested by an AI agent.

- AI agent platforms that support MCP are capable of discovering available tools from the servers and invoking them through this standard protocol.
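Discovery and invocation can be sketched on the agent side: list a server's tools, following the cursor-based pagination that `tools/list` supports (`cursor` in the params, `nextCursor` in the result, per the MCP specification), then call one with `tools/call`. The paged `fake_send` server and the tool names are hypothetical.

```python
from typing import Callable


def list_all_tools(send: Callable[[dict], dict]) -> list:
    """Collect every tool a server offers, following tools/list pagination cursors."""
    tools, cursor, request_id = [], None, 1
    while True:
        params = {"cursor": cursor} if cursor else {}
        reply = send({"jsonrpc": "2.0", "id": request_id, "method": "tools/list", "params": params})
        request_id += 1
        tools.extend(reply["result"]["tools"])
        cursor = reply["result"].get("nextCursor")
        if not cursor:
            return tools


def make_tool_call(request_id: int, name: str, arguments: dict) -> dict:
    """Invoke a discovered tool through the standard tools/call method."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/call",
            "params": {"name": name, "arguments": arguments}}


# Hypothetical server that returns its tool list in two pages.
PAGES = {
    None: ([{"name": "search_docs", "description": "Search documents"}], "page-2"),
    "page-2": ([{"name": "send_email", "description": "Send an email"}], None),
}


def fake_send(request: dict) -> dict:
    page, next_cursor = PAGES[request["params"].get("cursor")]
    result = {"tools": page}
    if next_cursor:
        result["nextCursor"] = next_cursor
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


tools = list_all_tools(fake_send)
print([t["name"] for t in tools])  # ['search_docs', 'send_email']
call = make_tool_call(10, "search_docs", {"query": "refund policy"})
```

The agent needs no server-specific code: the same two methods work against any MCP server.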

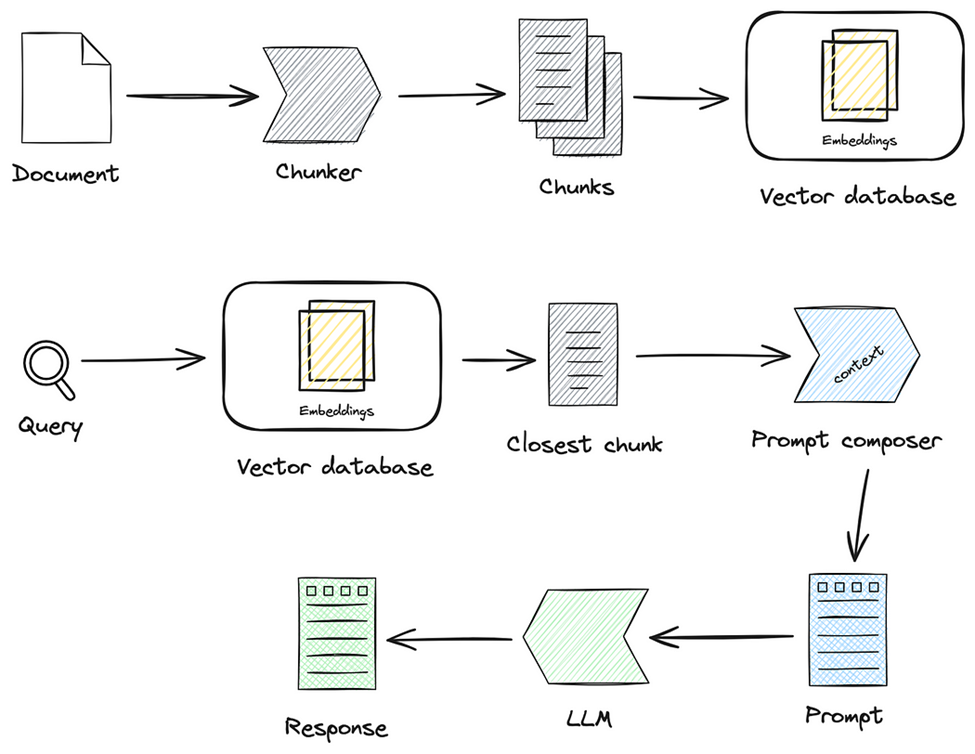

Facilitates access to knowledge

- Beyond offering tools, MCP also facilitates access to knowledge.

- It enables applications to provide context to large language models (LLMs) by linking them to various data sources.

- For instance, an MCP server might represent a company’s document repository, allowing agents to retrieve relevant information on demand.

- Another server could handle specific actions like sending emails or updating records.

- From the agent’s perspective, these are simply tools it can use: some return data (knowledge context), while others perform actions.

- MCP efficiently manages both.

- An agent connecting to an MCP server automatically learns the server’s available capabilities and accessible data through a standard format.

- This standardization enables dynamic tool availability.

- For example, adding a new MCP server to an agent’s system makes its functions immediately usable without requiring further customization of the agent’s instructions.
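Dynamic tool availability can be sketched as an agent-side catalog that fetches tools when a server is connected and refreshes them when the server sends `notifications/tools/list_changed` (a notification defined in the MCP specification). The `ToolCatalog` class, the `server__tool` naming scheme, and the docs and mail servers are hypothetical.

```python
from typing import Callable


class ToolCatalog:
    """Agent-side view of all tools across connected servers (hypothetical helper)."""

    def __init__(self) -> None:
        self._fetchers: dict = {}  # server name -> function returning its current tool list
        self._tools: dict = {}     # server name -> cached tool definitions

    def connect(self, server: str, fetch_tools: Callable[[], list]) -> None:
        """Adding a server makes its tools usable right away: fetch and cache them."""
        self._fetchers[server] = fetch_tools
        self._tools[server] = fetch_tools()

    def on_notification(self, server: str, message: dict) -> None:
        """Refresh one server's tools when it announces that its list changed."""
        if message.get("method") == "notifications/tools/list_changed":
            self._tools[server] = self._fetchers[server]()

    def for_model(self) -> list:
        """Tool descriptions handed to the LLM; no prompt edits needed per tool."""
        return [
            {"name": f"{server}__{t['name']}", "description": t.get("description", "")}
            for server, tools in self._tools.items() for t in tools
        ]


docs_tools = [{"name": "search_docs", "description": "Search the document repository"}]
catalog = ToolCatalog()
catalog.connect("docs", lambda: list(docs_tools))  # copy, so the cache only changes on refresh
catalog.connect("mail", lambda: [{"name": "send_email", "description": "Send an email"}])

docs_tools.append({"name": "get_doc", "description": "Fetch one document"})
catalog.on_notification("docs", {"jsonrpc": "2.0", "method": "notifications/tools/list_changed"})
names = [t["name"] for t in catalog.for_model()]
print(names)  # ['docs__search_docs', 'docs__get_doc', 'mail__send_email']
```

Prefixing tool names with the server name is one way to avoid collisions when two servers expose tools with the same name.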

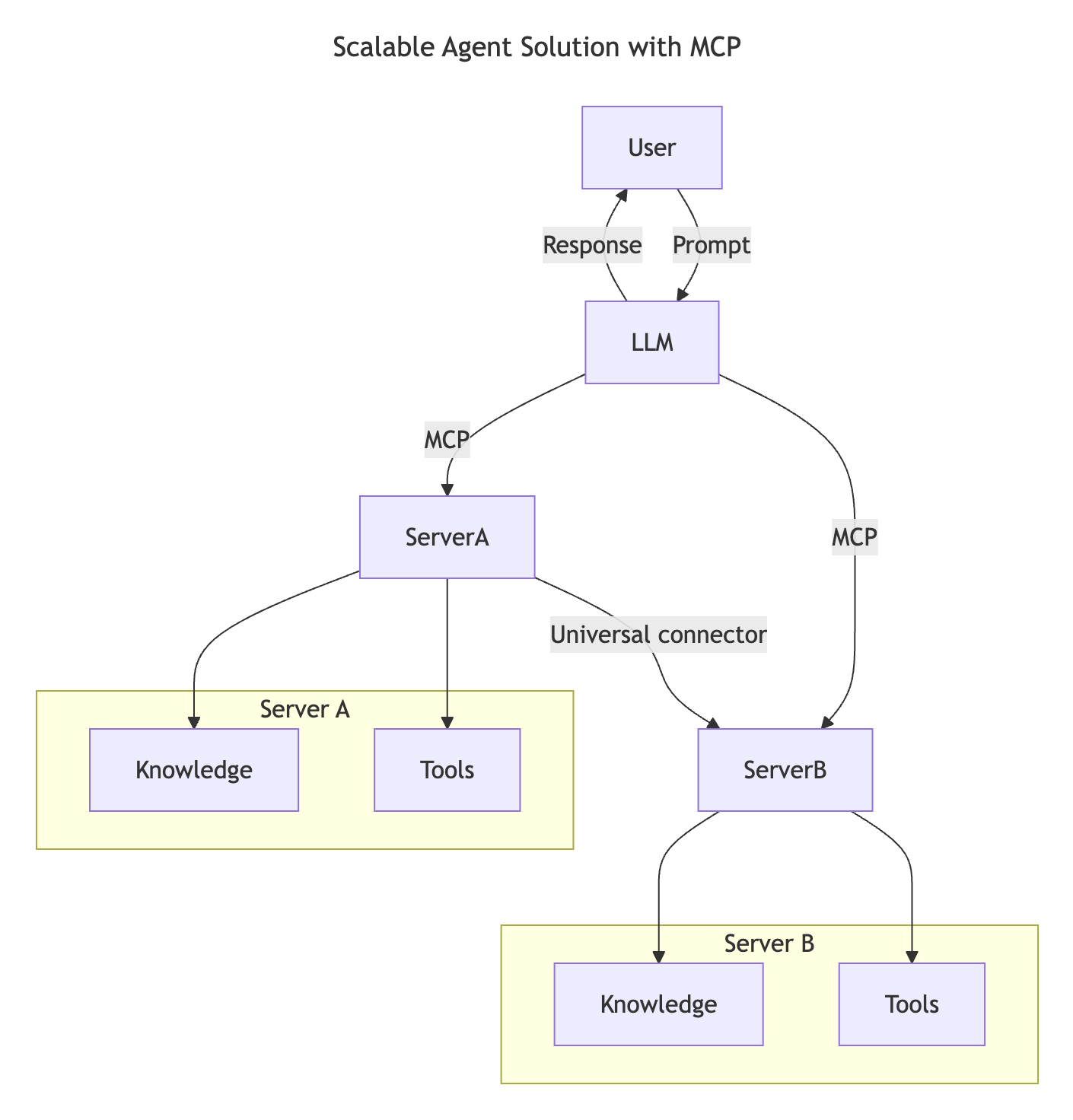

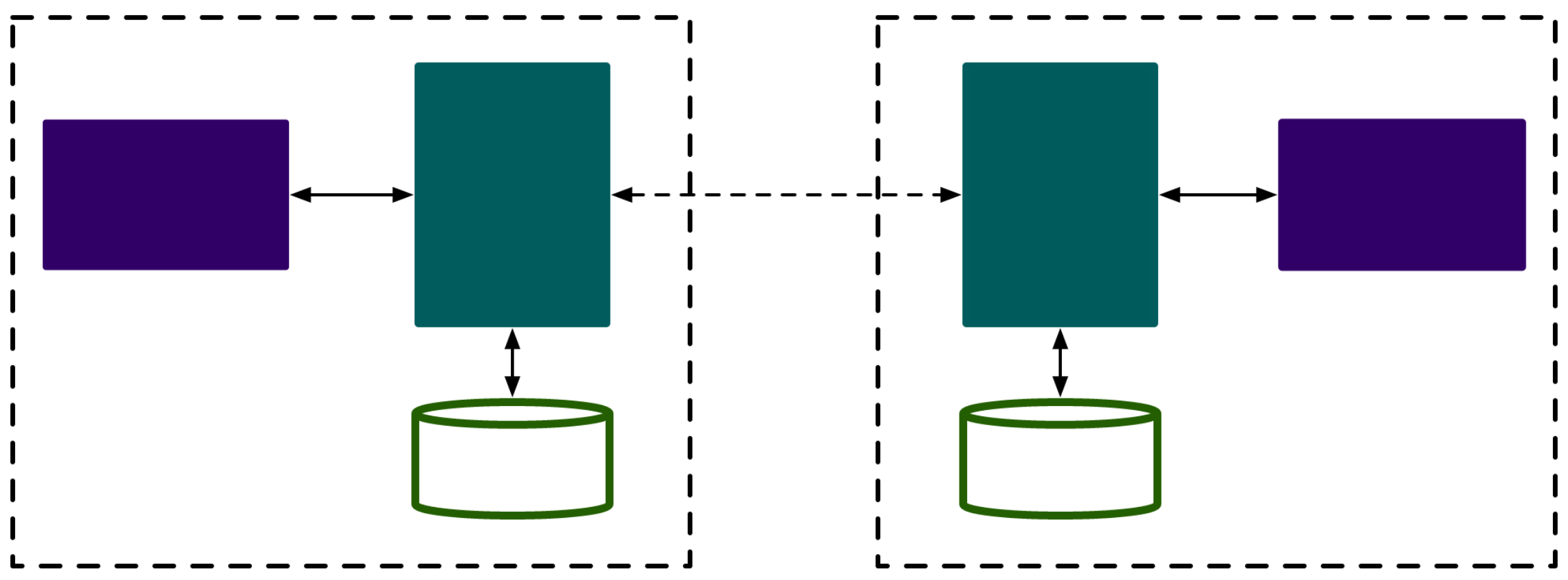

- This streamlined integration aligns with the flow depicted in the diagram, where servers provide both tools and knowledge, ensuring seamless collaboration across systems.

Example: Scalable Agent Solution

- The Universal Connector enables MCP servers to communicate and share capabilities with each other, allowing ServerA to delegate tasks to ServerB or access its tools and knowledge.

- This federates tools and data across servers, supporting scalable and modular agent architectures.

- Because MCP standardizes tool exposure, agents can dynamically discover and route requests between servers without hardcoded integrations.

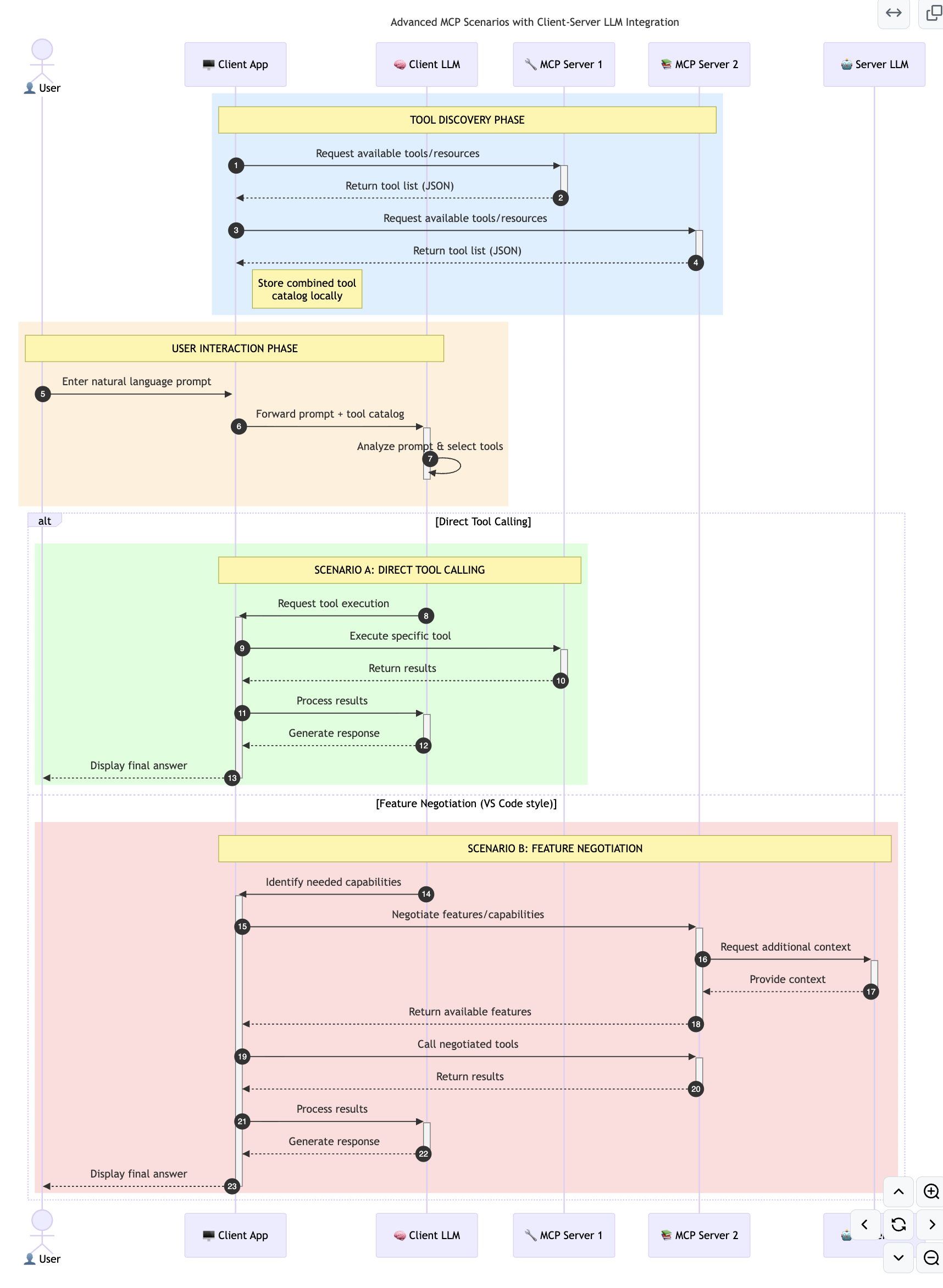

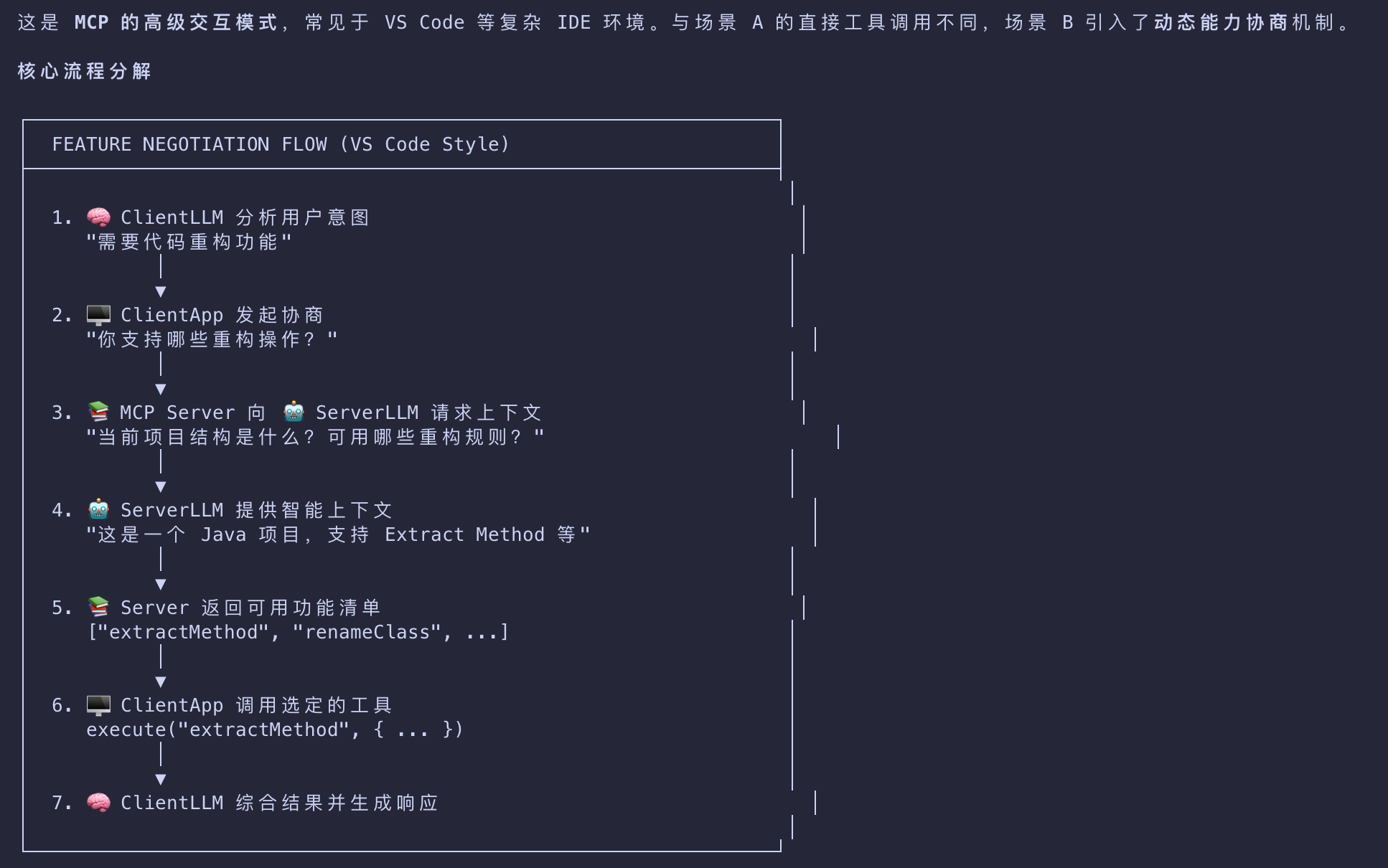

Advanced MCP Scenarios with Client-Side LLM Integration

- Beyond the basic MCP architecture, there are advanced scenarios where both client and server contain LLMs, enabling more sophisticated interactions.

- In the following diagram, the Client App could be an IDE with a number of MCP tools available for use by the LLM:

Practical Benefits of MCP

Here are the practical benefits of using MCP:

| Benefit | Description |
|---|---|
| Freshness | Models can access up-to-date information beyond their training data |
| Capability Extension | Models can leverage specialized tools for tasks they weren’t trained for |
| Reduced Hallucinations | External data sources provide factual grounding |
| Privacy | Sensitive data can stay within secure environments instead of being embedded in prompts |

Key Takeaways

The following are key takeaways for using MCP:

- MCP standardizes how AI models interact with tools and data

- Promotes extensibility, consistency, and interoperability

- MCP helps reduce development time, improve reliability, and extend model capabilities

- The client-server architecture enables flexible, extensible AI applications

Exercise

Think about an AI application you’re interested in building.

- Which external tools or data could enhance its capabilities?

- How might MCP make integration simpler and more reliable?

All articles on this blog are licensed under CC BY-NC-SA 4.0 unless otherwise stated.